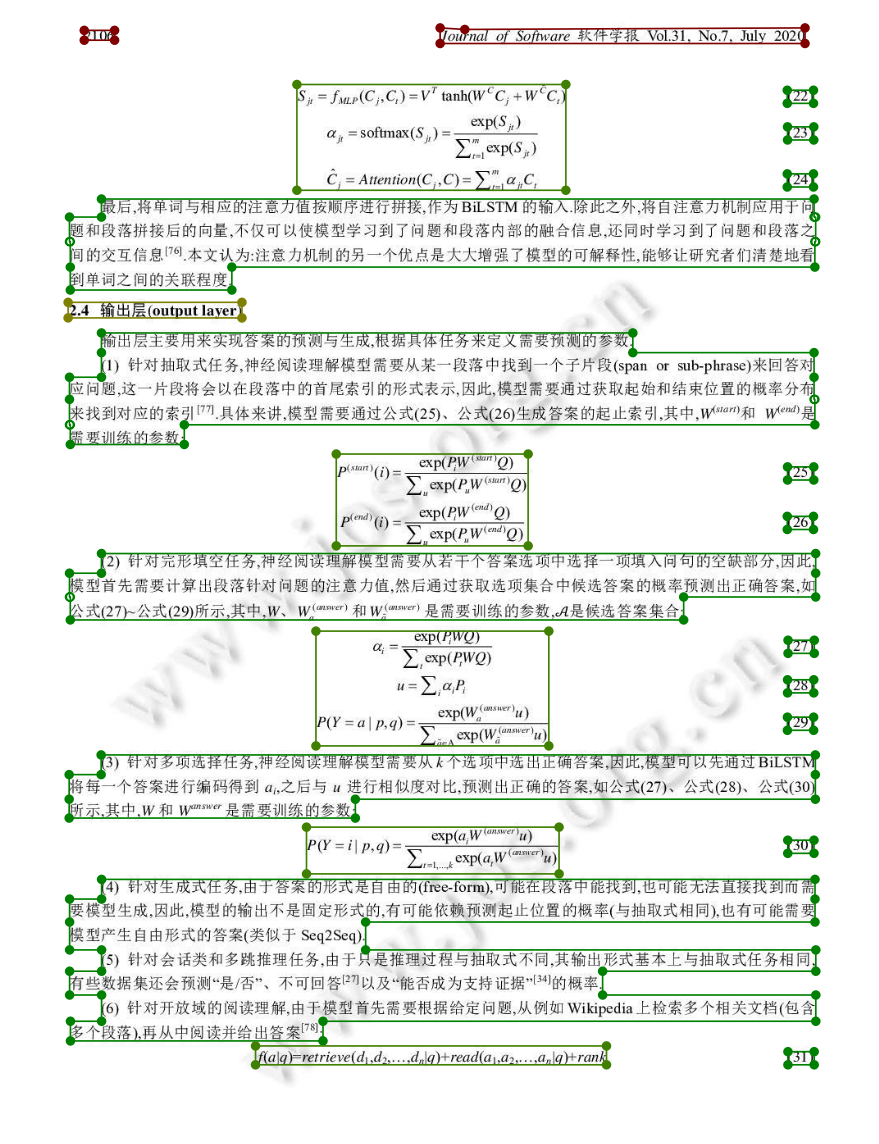

CDLA: A Chinese document layout analysis (CDLA) dataset

Introduction

CDLA is a Chinese document layout analysis dataset targeting Chinese academic literature (papers). It contains the following 10 labels:

| Text | Title | Figure | Figure caption | Table | Table caption | Header | Footer | Reference | Equation |
|---|---|---|---|---|---|---|---|---|---|
| 正文 | 标题 | 图片 | 图片标题 | 表格 | 表格标题 | 页眉 | 页脚 | 注释 | 公式 |

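The dataset contains 5,000 training images and 1,000 validation images, stored in the train and val directories respectively.

Compiled from: CDLA

Usage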

from datasets import load_dataset

dataset = load_dataset("SWHL/CDLA")

train_data = dataset["train"]

print(train_data[0])

val_data = dataset["validation"]

print(val_data[0])

# {'image': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=1240x1754 at 0x12FEE3DF0>,

# 'version': '4.5.6', 'flags': {},

# 'shapes': [

# {'label': 'Header', 'points': [[118.0, 135.66666666666669]], 'group_id': None, 'shape_type': 'polygon', 'flags': {}}

# ],

# 'imagePath': 'train_0001.jpg', 'imageData': None, 'imageHeight': 1754, 'imageWidth': 1240}
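Each record is a plain dict with the fields shown in the printed sample, so per-label statistics need no extra tooling. The sketch below is a minimal illustration: `count_labels` is a hypothetical helper name, and `sample` is a hand-written record trimmed from the output above (the `image` field is omitted), not data loaded from the Hub.

```python
from collections import Counter


def count_labels(record):
    """Count annotated instances per label in one CDLA record."""
    return Counter(shape["label"] for shape in record["shapes"])


# Hand-written record mirroring the printed sample (image omitted).
sample = {
    "version": "4.5.6",
    "flags": {},
    "shapes": [
        {
            "label": "Header",
            "points": [[118.0, 135.66666666666669]],
            "group_id": None,
            "shape_type": "polygon",
            "flags": {},
        },
    ],
    "imagePath": "train_0001.jpg",
    "imageData": None,
    "imageHeight": 1754,
    "imageWidth": 1240,
}

label_counts = count_labels(sample)
print(label_counts)
```

The same function should work on `train_data[0]` or inside a loop over `train_data`, assuming the loaded records keep the structure shown in the sample.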

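Download links

Annotation format

We annotated with labelme, so the annotation format matches labelme's. The most important fields are:

- shapes: a list of dicts, where each dict represents one annotated instance.
  - label: the category.
  - points: the vertices of the instance annotation. Because the annotations are polygons, points may contain more than 4 coordinates.
  - shape_type: "polygon"
- imagePath: image path / file name
- imageHeight: image height
- imageWidth: image width

A complete annotation example: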

{

"version":"4.5.6",

"flags":{},

"shapes":[

{

"label":"Title",

"points":[

[

553.1111111111111,

166.59259259259258

],

[

553.1111111111111,

198.59259259259258

],

[

686.1111111111111,

198.59259259259258

],

[

686.1111111111111,

166.59259259259258

]

],

"group_id":null,

"shape_type":"polygon",

"flags":{}

},

{

"label":"Text",

"points":[

[

250.5925925925925,

298.0740740740741

],

[

250.5925925925925,

345.0740740740741

],

[

188.5925925925925,

345.0740740740741

],

[

188.5925925925925,

410.0740740740741

],

[

188.5925925925925,

456.0740740740741

],

[

324.5925925925925,

456.0740740740741

],

[

324.5925925925925,

410.0740740740741

],

[

1051.5925925925926,

410.0740740740741

],

[

1051.5925925925926,

345.0740740740741

],

[

1052.5925925925926,

345.0740740740741

],

[

1052.5925925925926,

298.0740740740741

]

],

"group_id":null,

"shape_type":"polygon",

"flags":{}

},

{

"label":"Footer",

"points":[

[

1033.7407407407406,

1634.5185185185185

],

[

1033.7407407407406,

1646.5185185185185

],

[

1052.7407407407406,

1646.5185185185185

],

[

1052.7407407407406,

1634.5185185185185

]

],

"group_id":null,

"shape_type":"polygon",

"flags":{}

}

],

"imagePath":"val_0031.jpg",

"imageData":null,

"imageHeight":1754,

"imageWidth":1240

}
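Many detectors expect axis-aligned boxes rather than polygons. As a minimal sketch, the code below parses a labelme-style annotation and derives one bounding box per shape. The helper names `polygon_bbox` and `shapes_to_boxes` are hypothetical, and `raw` is a shortened copy of the example above that keeps only the Title shape.

```python
import json


def polygon_bbox(points):
    """Return (x_min, y_min, x_max, y_max) enclosing a list of [x, y] points."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return min(xs), min(ys), max(xs), max(ys)


def shapes_to_boxes(annotation):
    """Map every shape in a labelme annotation to (label, bounding box)."""
    return [
        (shape["label"], polygon_bbox(shape["points"]))
        for shape in annotation["shapes"]
    ]


# Shortened copy of the example annotation (Title shape only).
raw = """
{
  "version": "4.5.6",
  "flags": {},
  "shapes": [
    {
      "label": "Title",
      "points": [
        [553.1111111111111, 166.59259259259258],
        [553.1111111111111, 198.59259259259258],
        [686.1111111111111, 198.59259259259258],
        [686.1111111111111, 166.59259259259258]
      ],
      "group_id": null,
      "shape_type": "polygon",
      "flags": {}
    }
  ],
  "imagePath": "val_0031.jpg",
  "imageData": null,
  "imageHeight": 1754,
  "imageWidth": 1240
}
"""

annotation = json.loads(raw)
boxes = shapes_to_boxes(annotation)
print(boxes)
```

Taking the min and max over all vertices also covers polygons with more than 4 points, such as the Text shape in the full example.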

Converting to COCO format

# train

python3 labelme2coco.py CDLA_dir/train train_save_path --labels labels.txt

# val

python3 labelme2coco.py CDLA_dir/val val_save_path --labels labels.txt

The converted results are saved in the train_save_path and val_save_path directories.
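The commands above take a labels.txt file, whose contents are not shown here. The sketch below writes one from the 10 labels listed in the introduction. It assumes the convention used by labelme's example conversion script, where the first line is `__ignore__` and the second is `_background_`; check this against the copy of labelme2coco.py you actually run. `write_labels_file` is a hypothetical helper name.

```python
from pathlib import Path

# The 10 CDLA labels, in the order of the label table.
CDLA_LABELS = [
    "Text",
    "Title",
    "Figure",
    "Figure caption",
    "Table",
    "Table caption",
    "Header",
    "Footer",
    "Reference",
    "Equation",
]


def write_labels_file(path, labels=CDLA_LABELS):
    """Write one label per line, preceded by the two assumed special entries."""
    # Assumption: the conversion script expects these two leading lines.
    lines = ["__ignore__", "_background_", *labels]
    Path(path).write_text("\n".join(lines) + "\n", encoding="utf-8")
    return lines


if __name__ == "__main__":
    write_labels_file("labels.txt")
```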

labelme2coco.py is taken from labelme; for more information, see the official labelme project.
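To make the target format concrete, here is a rough sketch of how one labelme polygon maps onto a COCO-style annotation entry. It is not the code in labelme2coco.py: the area comes from the shoelace formula rather than a rasterized mask, and the helper names and the `category_ids` mapping are hypothetical.

```python
def polygon_area(points):
    """Area of a simple polygon via the shoelace formula."""
    total = 0.0
    count = len(points)
    for i in range(count):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % count]  # wrap around to close the polygon
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def shape_to_coco(shape, image_id, annotation_id, category_ids):
    """Build a COCO-style annotation dict from one labelme shape."""
    points = shape["points"]
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    x_min, y_min = min(xs), min(ys)
    return {
        "id": annotation_id,
        "image_id": image_id,
        "category_id": category_ids[shape["label"]],
        # COCO stores each polygon as a flat [x1, y1, x2, y2, ...] list.
        "segmentation": [[coord for point in points for coord in point]],
        # COCO boxes are [x, y, width, height].
        "bbox": [x_min, y_min, max(xs) - x_min, max(ys) - y_min],
        "area": polygon_area(points),
        "iscrowd": 0,
    }


# Footer shape from the annotation example; the category id is illustrative.
footer = {
    "label": "Footer",
    "points": [
        [1033.7407407407406, 1634.5185185185185],
        [1033.7407407407406, 1646.5185185185185],
        [1052.7407407407406, 1646.5185185185185],
        [1052.7407407407406, 1634.5185185185185],
    ],
    "group_id": None,
    "shape_type": "polygon",
    "flags": {},
}

coco_annotation = shape_to_coco(
    footer, image_id=0, annotation_id=0, category_ids={"Footer": 8}
)
print(coco_annotation["bbox"], coco_annotation["area"])
```

For this rectangular Footer the box is 19 pixels wide and 12 pixels tall, so the area should come out at about 228.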
