Corsican - Wikilangs Models

Comprehensive Research Report & Full Ablation Study

This repository contains NLP models trained and evaluated by Wikilangs, specifically on Corsican Wikipedia data. We analyze tokenizers, n-gram models, Markov chains, vocabulary statistics, and word embeddings.

📋 Repository Contents

Models & Assets

- Tokenizers (8k, 16k, 32k, 64k)

- N-gram models (2, 3, 4, 5-gram)

- Markov chains (context of 1, 2, 3, 4 and 5)

- Subword N-gram and Markov chains

- Embeddings in various sizes and dimensions (aligned and unaligned)

- Language Vocabulary

- Language Statistics

Analysis and Evaluation

- 1. Tokenizer Evaluation

- 2. N-gram Model Evaluation

- 3. Markov Chain Evaluation

- 4. Vocabulary Analysis

- 5. Word Embeddings Evaluation

- 6. Morphological Analysis (Experimental)

- 7. Summary & Recommendations

- Metrics Glossary

- Visualizations Index

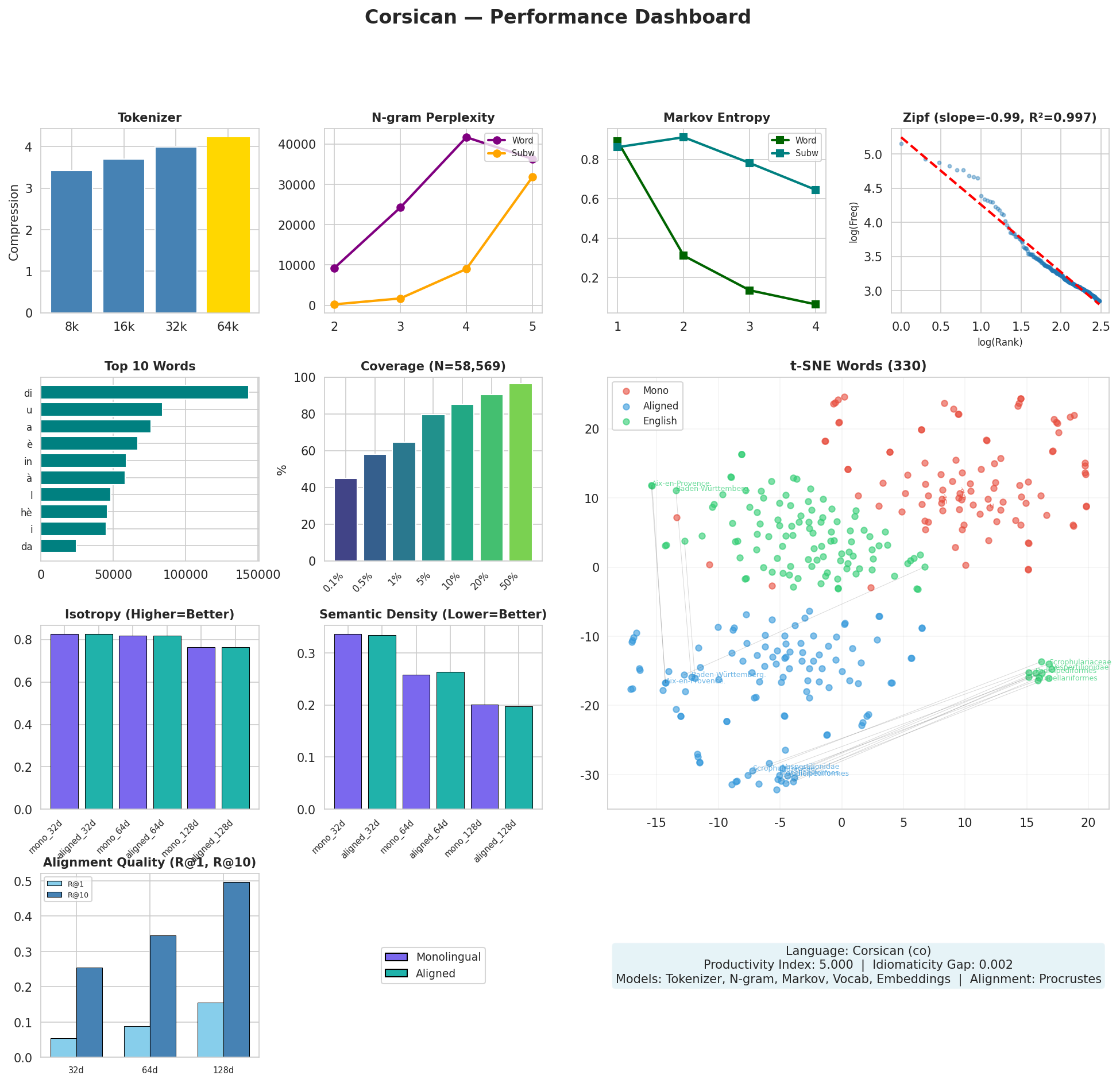

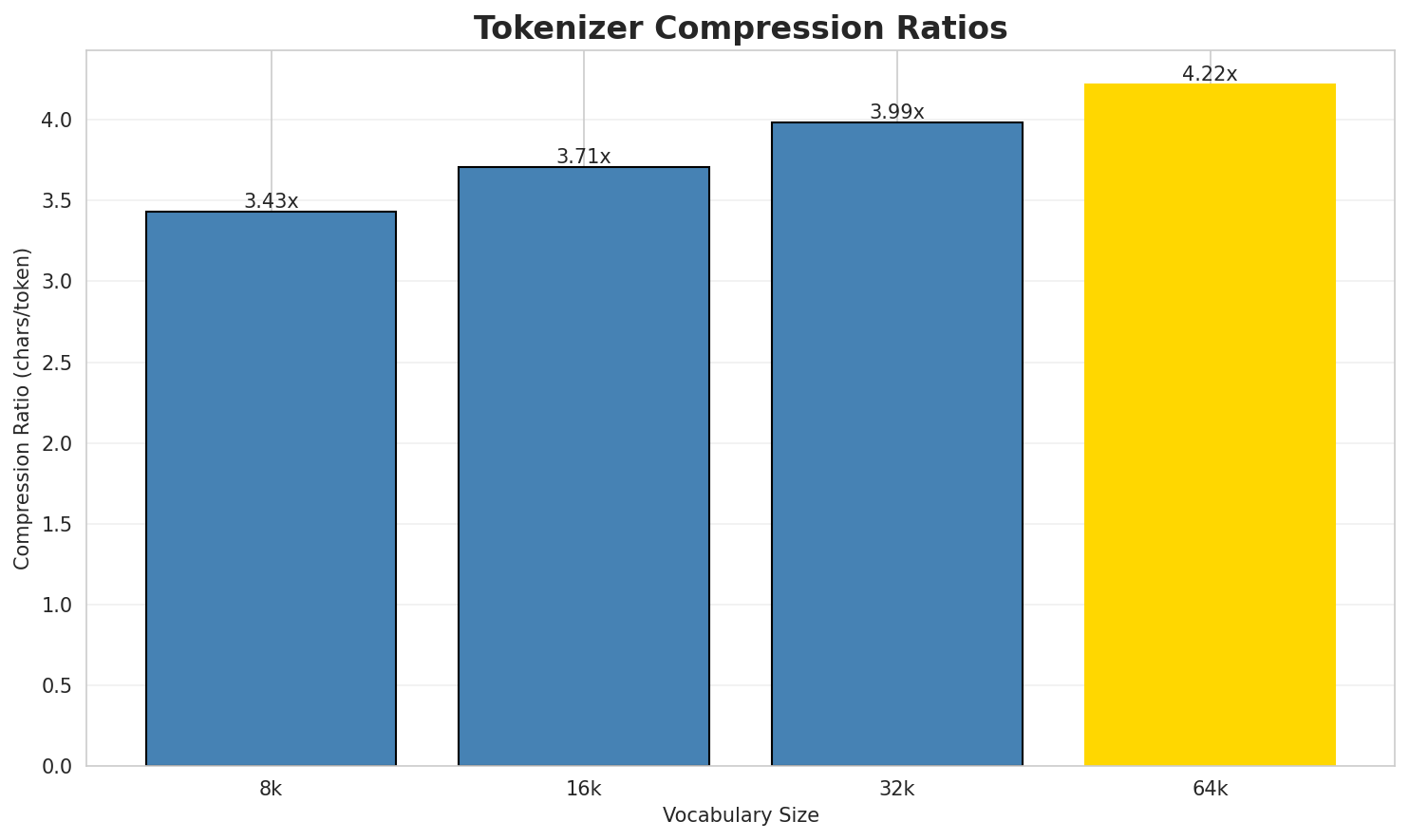

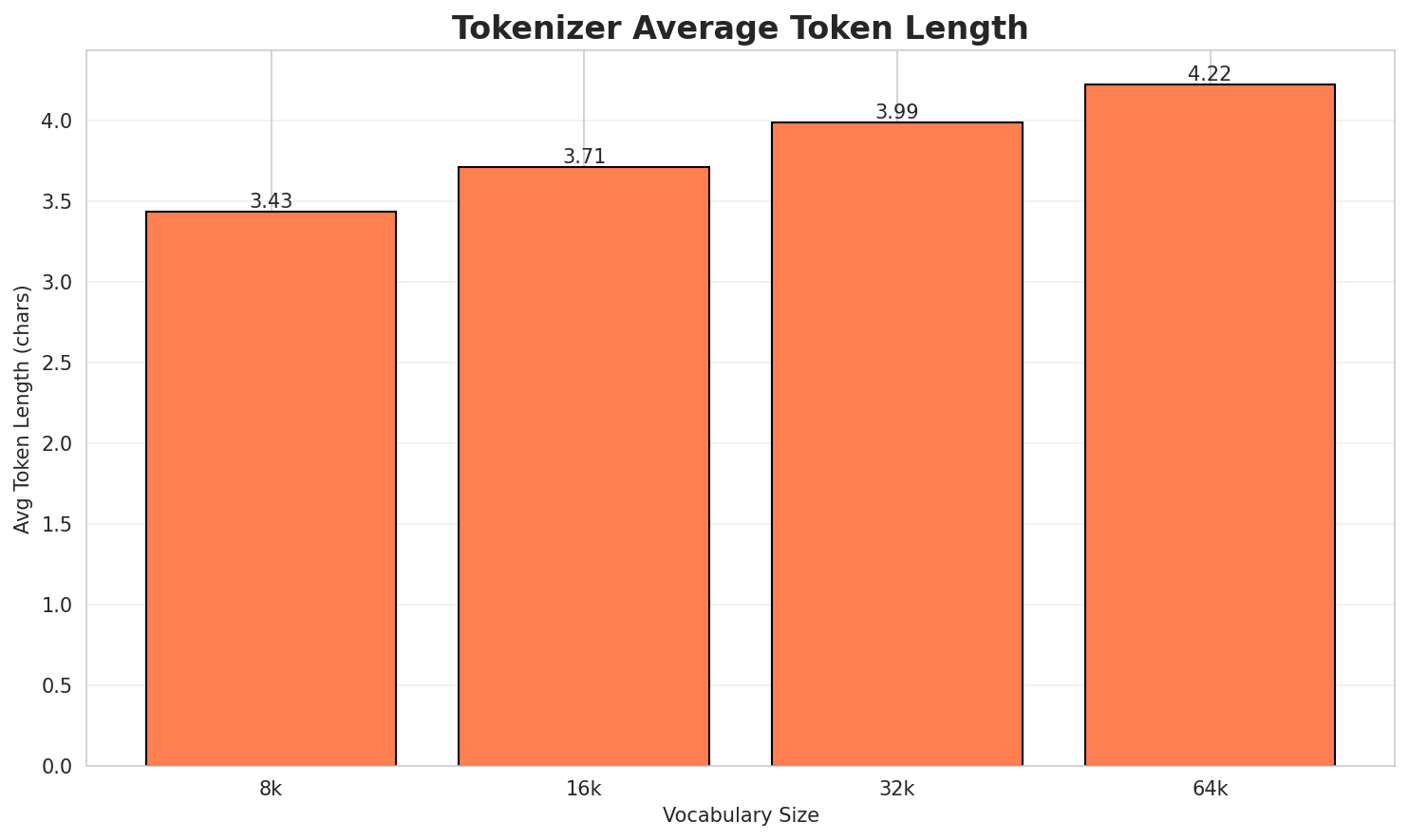

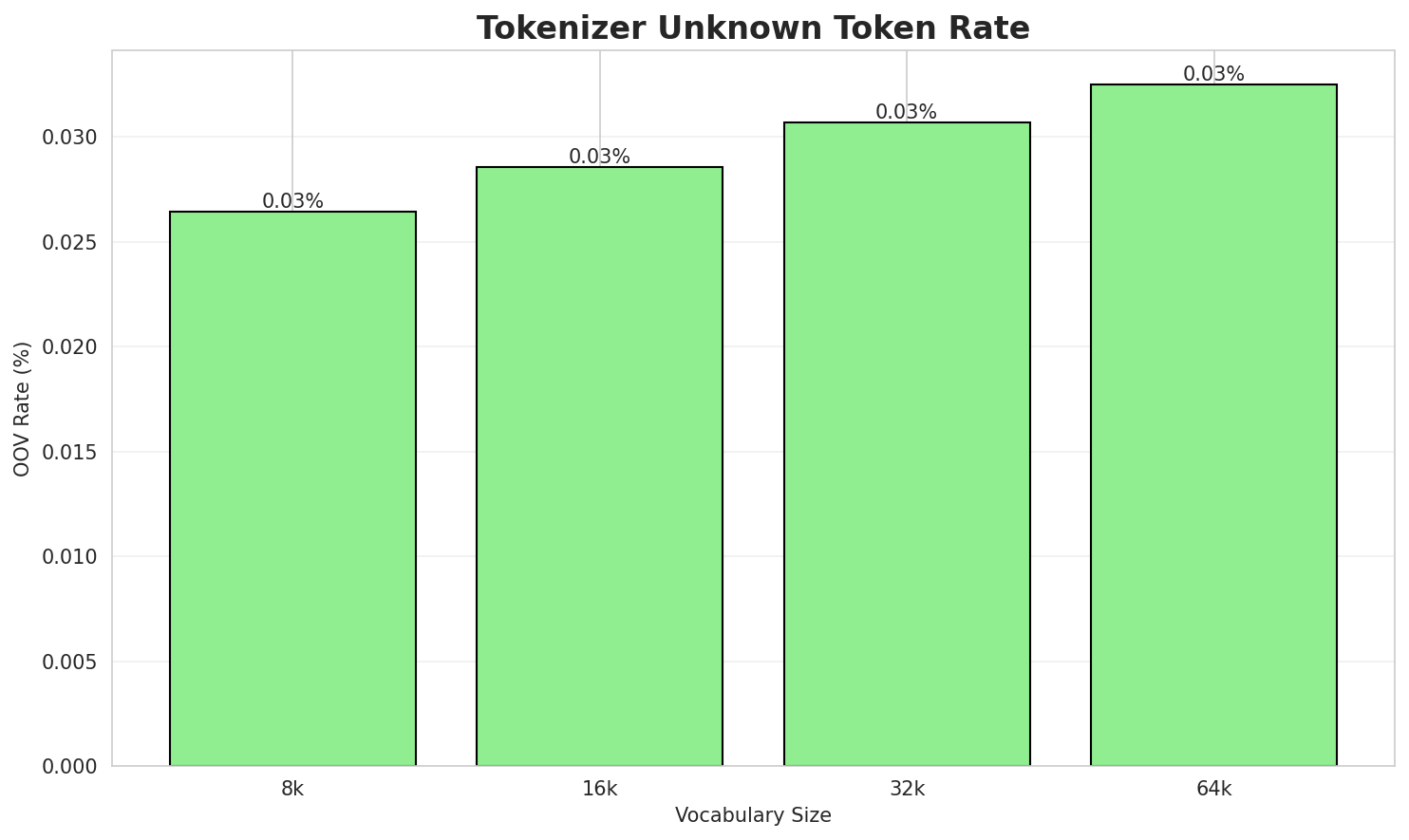

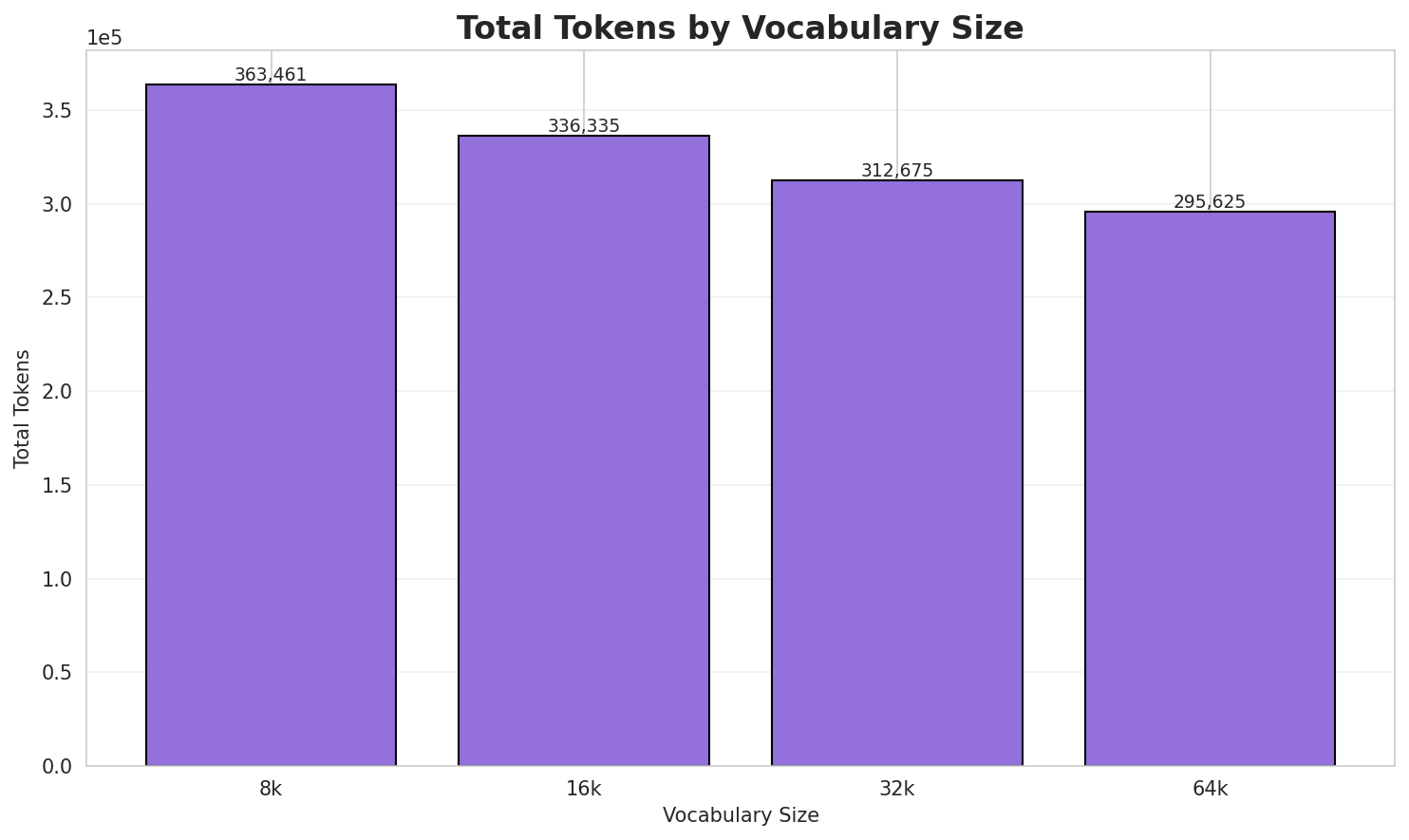

1. Tokenizer Evaluation

Results

| Vocab Size | Compression | Avg Token Len | UNK Rate | Total Tokens |
|---|---|---|---|---|
| 8k | 3.429x | 3.43 | 0.0264% | 363,461 |
| 16k | 3.706x | 3.71 | 0.0285% | 336,335 |
| 32k | 3.986x | 3.99 | 0.0307% | 312,675 |
| 64k | 4.216x 🏆 | 4.22 | 0.0325% | 295,625 |
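The glossary defines compression as characters per token. Multiplying each row's compression by its total token count therefore estimates the size of the evaluation text. The arithmetic below is a consistency check on the table's rounded values, not part of the original pipeline:

```python
# Rows restated from the results table: vocab size -> (compression, total tokens)
rows = {
    "8k": (3.429, 363_461),
    "16k": (3.706, 336_335),
    "32k": (3.986, 312_675),
    "64k": (4.216, 295_625),
}

# compression = characters / tokens, so characters ≈ compression * tokens
implied_chars = {vocab: ratio * tokens for vocab, (ratio, tokens) in rows.items()}

for vocab, chars in implied_chars.items():
    print(f"{vocab}: ~{chars:,.0f} characters")

# Relative gap between the largest and smallest estimate
spread = max(implied_chars.values()) / min(implied_chars.values()) - 1
print(f"relative spread: {spread:.4%}")
```

All four rows come out at about 1.246 million characters, with a spread of roughly 0.01%. This is consistent with every tokenizer having been scored on the same text.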

Tokenization Examples

Below are sample sentences tokenized with each vocabulary size:

Sample 1: Ophrys splendida hè una pianta chì face partita di a famiglia di l'orchidaceae. ...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁ophrys ▁sp len di da ▁hè ▁una ▁pianta ▁chì ▁face ... (+13 more) | 23 |
| 16k | ▁ophrys ▁splen di da ▁hè ▁una ▁pianta ▁chì ▁face ▁partita ... (+12 more) | 22 |
| 32k | ▁ophrys ▁splendi da ▁hè ▁una ▁pianta ▁chì ▁face ▁partita ▁di ... (+11 more) | 21 |
| 64k | ▁ophrys ▁splendida ▁hè ▁una ▁pianta ▁chì ▁face ▁partita ▁di ▁a ... (+10 more) | 20 |

Sample 2: U Mucale hè una cumuna di u dipartimentu di a Corsica suprana. Geografia Storia ...

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁u ▁mu cale ▁hè ▁una ▁cumuna ▁di ▁u ▁dipartimentu ▁di ... (+14 more) | 24 |
| 16k | ▁u ▁mu cale ▁hè ▁una ▁cumuna ▁di ▁u ▁dipartimentu ▁di ... (+14 more) | 24 |
| 32k | ▁u ▁mucale ▁hè ▁una ▁cumuna ▁di ▁u ▁dipartimentu ▁di ▁a ... (+13 more) | 23 |
| 64k | ▁u ▁mucale ▁hè ▁una ▁cumuna ▁di ▁u ▁dipartimentu ▁di ▁a ... (+13 more) | 23 |

Sample 3: L'Emilia è Romagna hè una regione taliana. taliana

| Vocab | Tokens | Count |
|---|---|---|
| 8k | ▁l ' e mi lia ▁è ▁roma gna ▁hè ▁una ... (+4 more) | 14 |
| 16k | ▁l ' emi lia ▁è ▁roma gna ▁hè ▁una ▁regione ... (+3 more) | 13 |
| 32k | ▁l ' emi lia ▁è ▁romagna ▁hè ▁una ▁regione ▁taliana ... (+2 more) | 12 |
| 64k | ▁l ' emilia ▁è ▁romagna ▁hè ▁una ▁regione ▁taliana . ... (+1 more) | 11 |

Key Findings

- Best Compression: 64k achieves 4.216x compression

- Lowest UNK Rate: 8k with 0.0264% unknown tokens

- Trade-off: Larger vocabularies improve compression but increase model size and slightly raise the UNK rate (0.0264% at 8k vs. 0.0325% at 64k)

- Recommendation: 32k vocabulary provides optimal balance for production use

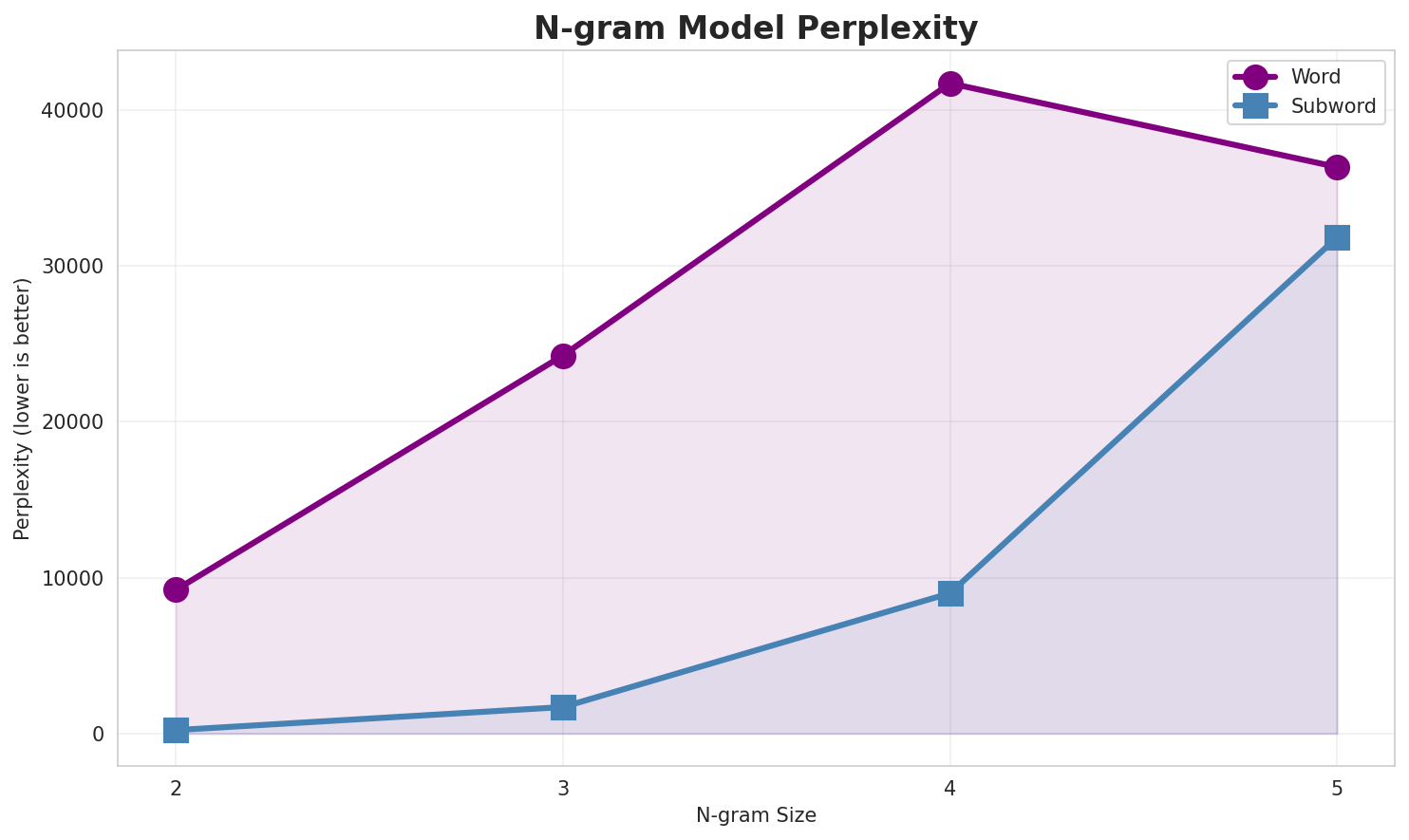

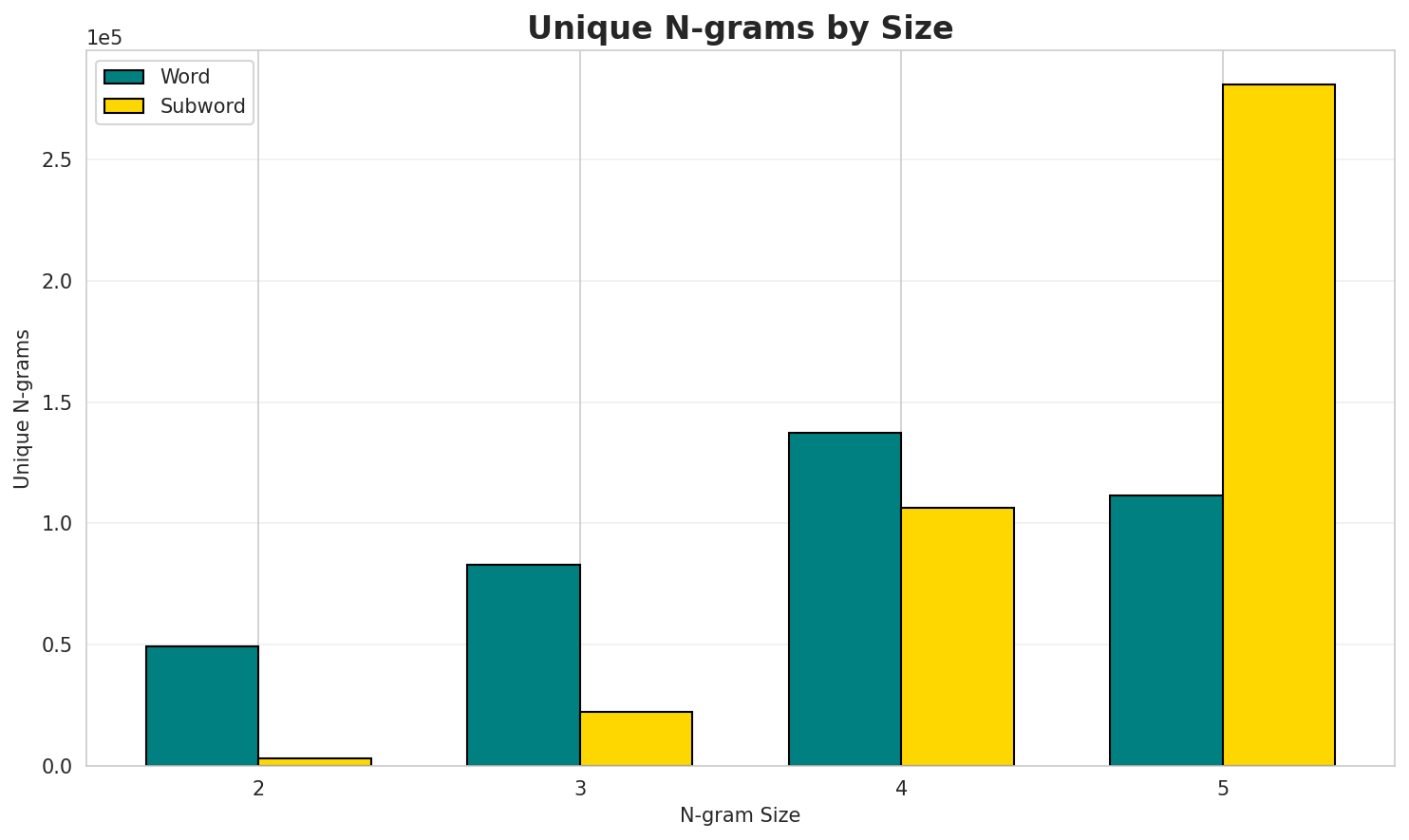

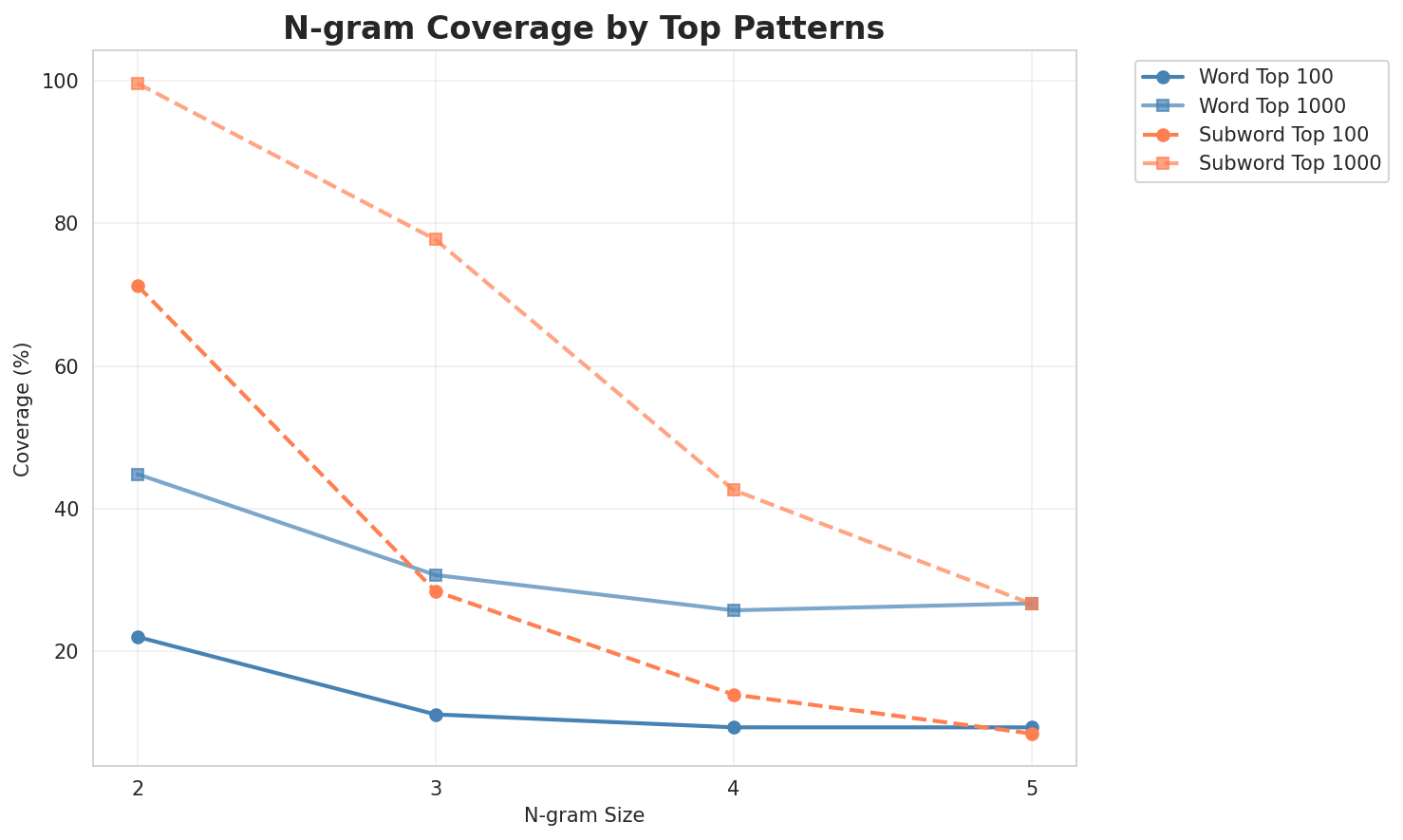

2. N-gram Model Evaluation

Results

| N-gram | Variant | Perplexity | Entropy (bits) | Unique N-grams | Top-100 Coverage | Top-1000 Coverage |
|---|---|---|---|---|---|---|
| 2-gram | Word | 9,217 | 13.17 | 49,361 | 22.0% | 44.8% |
| 2-gram | Subword | 220 🏆 | 7.78 | 3,170 | 71.3% | 99.6% |
| 3-gram | Word | 24,245 | 14.57 | 83,032 | 11.2% | 30.7% |
| 3-gram | Subword | 1,698 | 10.73 | 22,203 | 28.4% | 77.7% |
| 4-gram | Word | 41,699 | 15.35 | 137,212 | 9.3% | 25.7% |
| 4-gram | Subword | 9,000 | 13.14 | 106,299 | 13.9% | 42.6% |
| 5-gram | Word | 36,326 | 15.15 | 111,629 | 9.3% | 26.7% |
| 5-gram | Subword | 31,819 | 14.96 | 280,787 | 8.5% | 26.7% |
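The glossary relates the Perplexity and Entropy columns by perplexity = 2^entropy. Applying that relation to the rounded entropies reproduces each reported perplexity to within half a percent. The quick check below shows this. The dictionary simply restates the table:

```python
# (n-gram, variant): (reported perplexity, reported entropy in bits)
table = {
    ("2-gram", "Word"): (9_217, 13.17),
    ("2-gram", "Subword"): (220, 7.78),
    ("3-gram", "Word"): (24_245, 14.57),
    ("3-gram", "Subword"): (1_698, 10.73),
    ("4-gram", "Word"): (41_699, 15.35),
    ("4-gram", "Subword"): (9_000, 13.14),
    ("5-gram", "Word"): (36_326, 15.15),
    ("5-gram", "Subword"): (31_819, 14.96),
}

relative_errors = {}
for key, (ppl, entropy) in table.items():
    implied = 2 ** entropy  # perplexity implied by the entropy column
    relative_errors[key] = abs(implied - ppl) / ppl
    print(f"{key[0]} {key[1]:<8} reported={ppl:>7,} 2^H={implied:>9,.0f}")
```

The entropies are rounded to two decimals. That alone allows a discrepancy of up to about 0.35%, which accounts for the small gaps.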

Top 5 N-grams by Size

2-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | di u | 18,692 |
| 2 | di a | 18,500 |
| 3 | di l | 13,231 |
| 4 | di i | 10,603 |
| 5 | à u | 9,233 |

3-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | a famiglia di | 4,349 |
| 2 | hè una spezia | 3,359 |
| 3 | di a famiglia | 2,699 |
| 4 | hè una pianta | 2,612 |
| 5 | una spezia di | 2,290 |

4-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | di a famiglia di | 2,629 |
| 2 | a famiglia di i | 2,171 |
| 3 | hè una spezia di | 2,064 |
| 4 | annantu à wikimedia commons | 1,945 |
| 5 | à wikimedia commons di | 1,924 |

5-grams (Word):

| Rank | N-gram | Count |
|---|---|---|
| 1 | annantu à wikimedia commons di | 1,924 |
| 2 | à wikimedia commons di corsica | 1,923 |
| 3 | appartinendu à a famiglia di | 1,506 |
| 4 | flora corsica 2 ed edisud | 1,421 |
| 5 | d gamisans j flora corsica | 1,419 |

2-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | i _ | 432,205 |
| 2 | a _ | 403,888 |
| 3 | u _ | 315,849 |
| 4 | _ d | 246,098 |
| 5 | d i | 216,563 |

3-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ d i | 172,754 |
| 2 | d i _ | 151,658 |
| 3 | _ i n | 82,722 |
| 4 | _ u _ | 81,534 |
| 5 | _ a _ | 73,027 |

4-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | _ d i _ | 143,050 |
| 2 | _ i n _ | 57,478 |
| 3 | a _ d i | 45,041 |
| 4 | _ h è _ | 45,025 |
| 5 | i _ d i | 35,043 |

5-grams (Subword):

| Rank | N-gram | Count |
|---|---|---|
| 1 | a _ d i _ | 37,617 |
| 2 | i _ d i _ | 29,786 |
| 3 | u _ d i _ | 28,746 |
| 4 | e _ d i _ | 24,400 |
| 5 | i o n e _ | 21,123 |

Key Findings

- Best Perplexity: 2-gram (subword) with 220

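- Entropy Trend: Increases with n-gram order (word: 13.17 bits at 2-gram, 15.35 at 4-gram and 15.15 at 5-gram; subword: from 7.78 to 14.96 bits)

- Coverage: Top-1000 patterns cover ~27% of 5-gram occurrences, compared with 44.8% (word) and 99.6% (subword) for 2-grams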

- Recommendation: 4-gram or 5-gram for best predictive performance

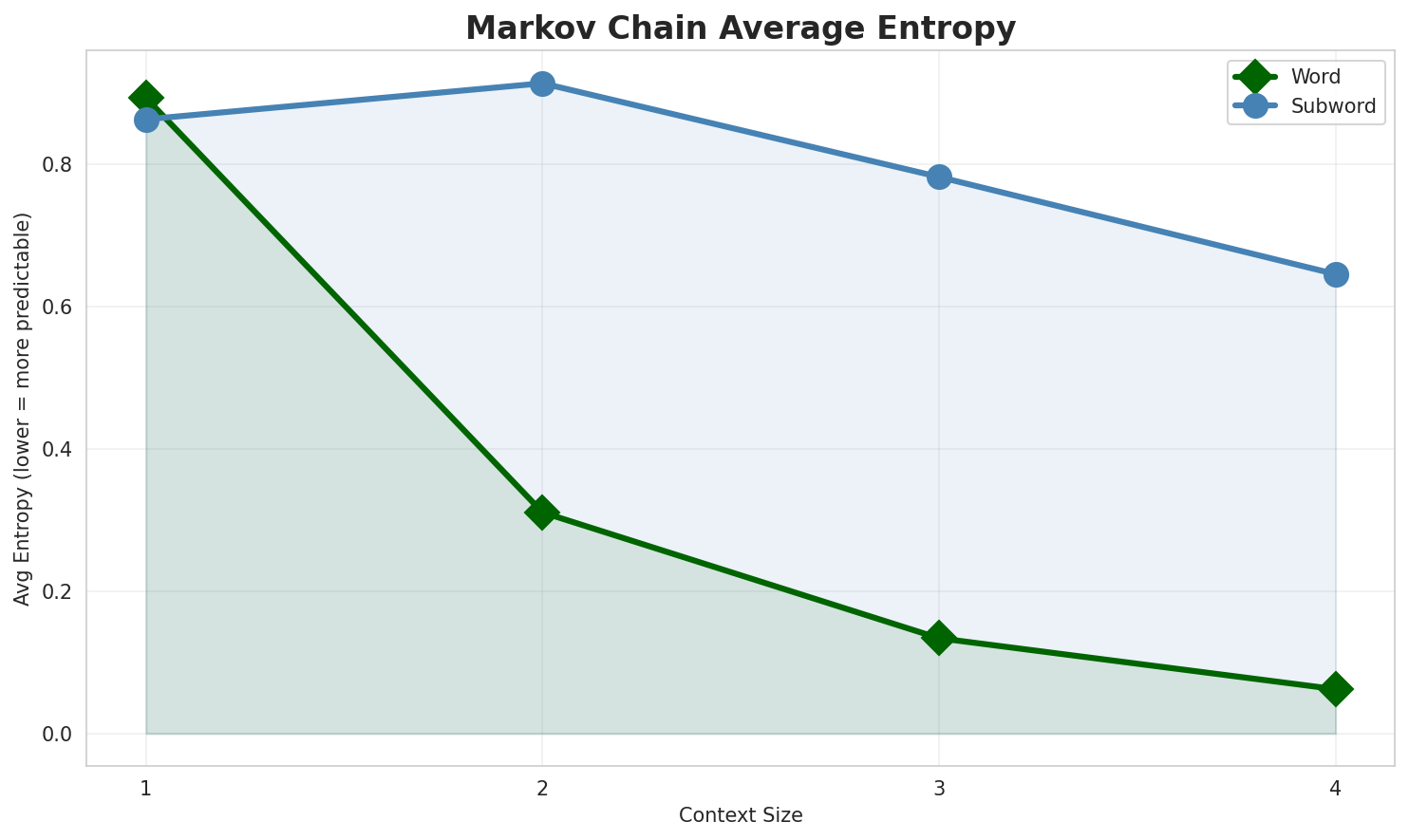

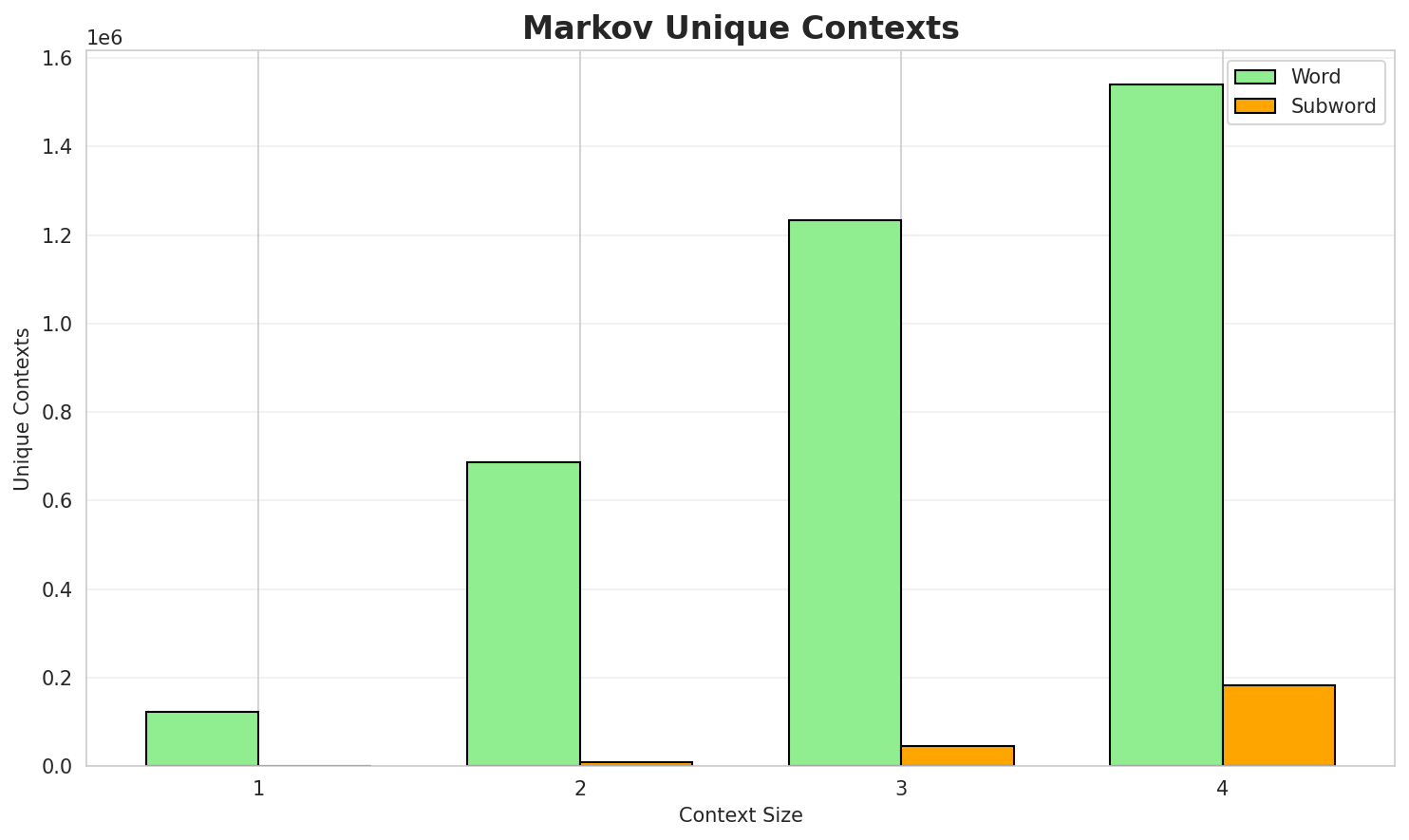

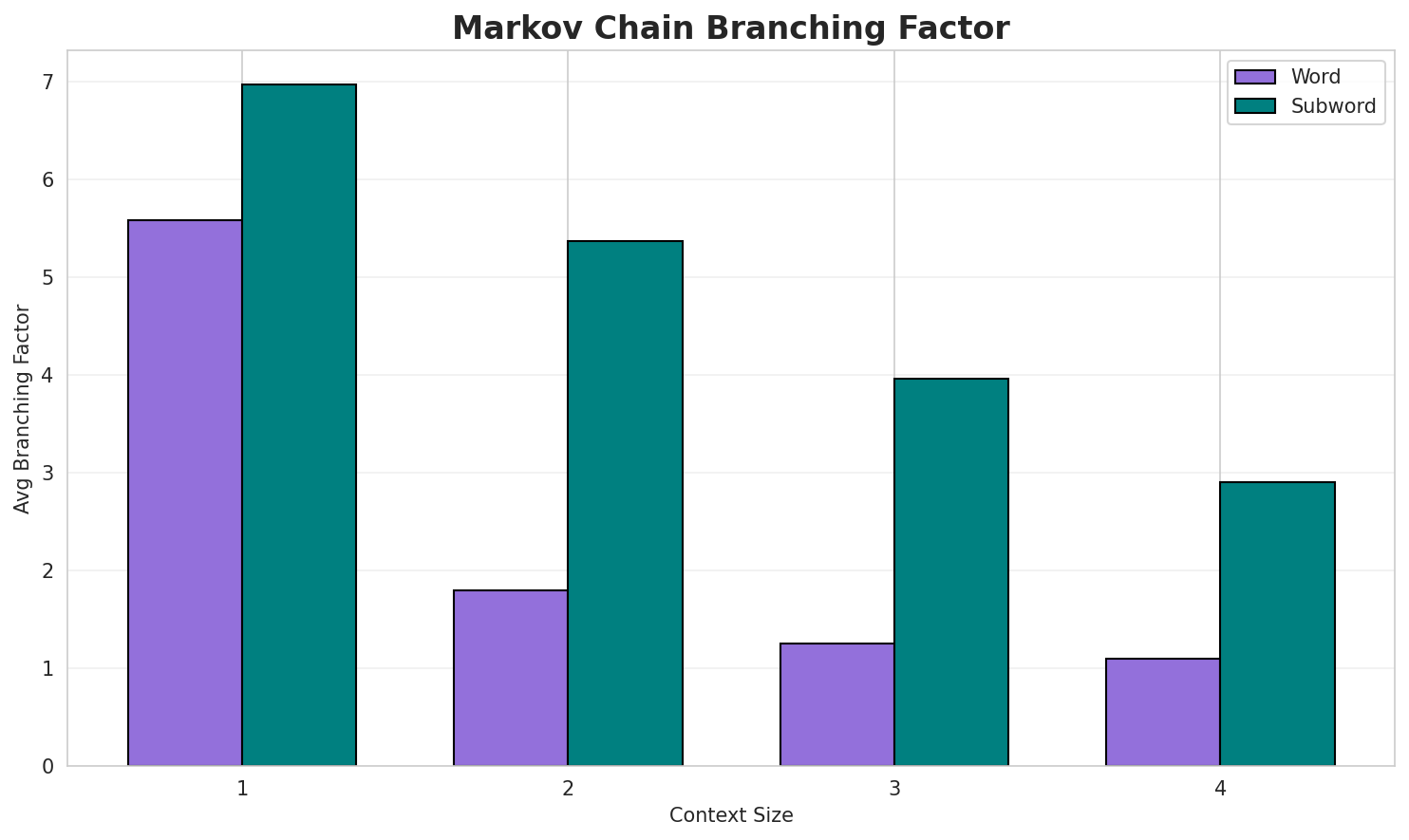

3. Markov Chain Evaluation

Results

| Context | Variant | Avg Entropy | Perplexity | Branching Factor | Unique Contexts | Predictability |
|---|---|---|---|---|---|---|
| 1 | Word | 0.8927 | 1.857 | 5.58 | 123,322 | 10.7% |
| 1 | Subword | 0.8627 | 1.818 | 6.97 | 1,238 | 13.7% |
| 2 | Word | 0.3106 | 1.240 | 1.80 | 686,898 | 68.9% |
| 2 | Subword | 0.9133 | 1.883 | 5.37 | 8,617 | 8.7% |
| 3 | Word | 0.1339 | 1.097 | 1.25 | 1,233,325 | 86.6% |
| 3 | Subword | 0.7817 | 1.719 | 3.96 | 46,221 | 21.8% |
| 4 | Word | 0.0623 🏆 | 1.044 | 1.10 | 1,539,570 | 93.8% |
| 4 | Subword | 0.6452 | 1.564 | 2.90 | 182,986 | 35.5% |

Generated Text Samples (Word-based)

Below are text samples generated from each word-based Markov chain model:

Context Size 1:

- di tuda hè una spezia hè un missale rumanu mandatu pè a prutezzione di l isula

- u calendariu gregorianu evenimenti nascite morte celebrazione feste i primi cristiani è l euru e zon...

- a bellula chì faci cantà senza scoddhi e pratuline i bagni di 25 aprile di nettaru

Context Size 2:

- di u mare à trasporti maritimi portivechju hà ancu statu cunnisciuta sottu u nomu simonu a casata

- di a spagna un statu di spiritu turmintosa da veda dinò camisgia pilonu a camisgetta di corsica

- di l europa occidentale di cipru di u bacinu mediterraniu induv ella hè ghjunta in alisgiani u

Context Size 3:

- a famiglia di l orobanchaceae si distingui da i so grandi fiori gialli è arancini à forma di

- hè una spezia largamente sparta in a so aria di ripartizioni eppuri certi pupulazioni poni essa mina...

- di a famiglia di i brassicaceae si caratterizeghja da u so portu cispugliosu è cumpattu aghjunghjend...

Context Size 4:

- di a famiglia di l arecaceae ed hè largamenti apprizzatu par a so biddezza è u so simbulu astrunomic...

- a famiglia di i sapindaceae discrizzioni l acer negundo hè un arburi scascianti chì pò aghjunghja un...

- hè una spezia di pianta chì faci parti di a famiglia di l hirundinidae descrizzione a rundinella cas...

Generated Text Samples (Subword-based)

Below are text samples generated from each subword-based Markov chain model:

Context Size 1:

_diri_25_à_di_d'iori_hà_siceisu_adia_puvezota_fi

Context Size 2:

i_re_culupula_à_sa_ufoltrupaticharu_à_ligna_culanea

Context Size 3:

_di_abbrunu,_catordi_arbaceae._nore__induv'eddu;_annan

Context Size 4:

_di_yprestitudi_à_s_in_amba_di_l'incena_di_l'aurolli_di_b

Key Findings

- Best Predictability: Context-4 (word) with 93.8% predictability

- Branching Factor: Decreases with context size (more deterministic)

- Memory Trade-off: Larger contexts require more storage (1,539,570 unique contexts for word context-4; 182,986 for subword context-4)

- Recommendation: Context-3 or Context-4 for text generation
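Text generation from a word-level Markov chain with context size k can be sketched in two steps. First, count which token follows each k-token context. Then repeatedly sample a continuation for the last k generated tokens. This is a minimal illustration, not the Wikilangs pipeline. It uses plain frequency-proportional sampling with no smoothing, and the helper names `build_chain` and `generate` are hypothetical:

```python
import random
from collections import Counter, defaultdict


def build_chain(tokens, k):
    """Map each k-token context (a tuple) to a Counter of observed next tokens."""
    chain = defaultdict(Counter)
    for i in range(len(tokens) - k):
        context = tuple(tokens[i:i + k])
        chain[context][tokens[i + k]] += 1
    return chain


def generate(chain, seed, max_tokens, rng=None):
    """Extend `seed` (k tokens) by sampling continuations until a dead end or max_tokens."""
    if rng is None:
        rng = random.Random(0)  # fixed seed for reproducible samples
    k = len(seed)
    output = list(seed)
    while len(output) < max_tokens:
        counts = chain.get(tuple(output[-k:]))
        if not counts:
            break  # this context was never followed by anything in training
        words = list(counts)
        weights = [counts[w] for w in words]
        output.append(rng.choices(words, weights=weights, k=1)[0])
    return output


corpus = "di a famiglia di i brassicaceae".split()
chain = build_chain(corpus, k=2)
print(" ".join(generate(chain, ("di", "a"), max_tokens=10)))  # di a famiglia di i brassicaceae
```

In this toy corpus every context has a single continuation, so the output is deterministic. On a real corpus, a larger k leaves fewer continuations per context (the falling branching factor in the table above). Context-4 samples therefore tend to read more fluently, but they reuse longer spans of the training text.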

4. Vocabulary Analysis

Statistics

| Metric | Value |
|---|---|
| Vocabulary Size | 58,569 |
| Total Tokens | 2,191,854 |
| Mean Frequency | 37.42 |
| Median Frequency | 4 |
| Frequency Std Dev | 979.31 |

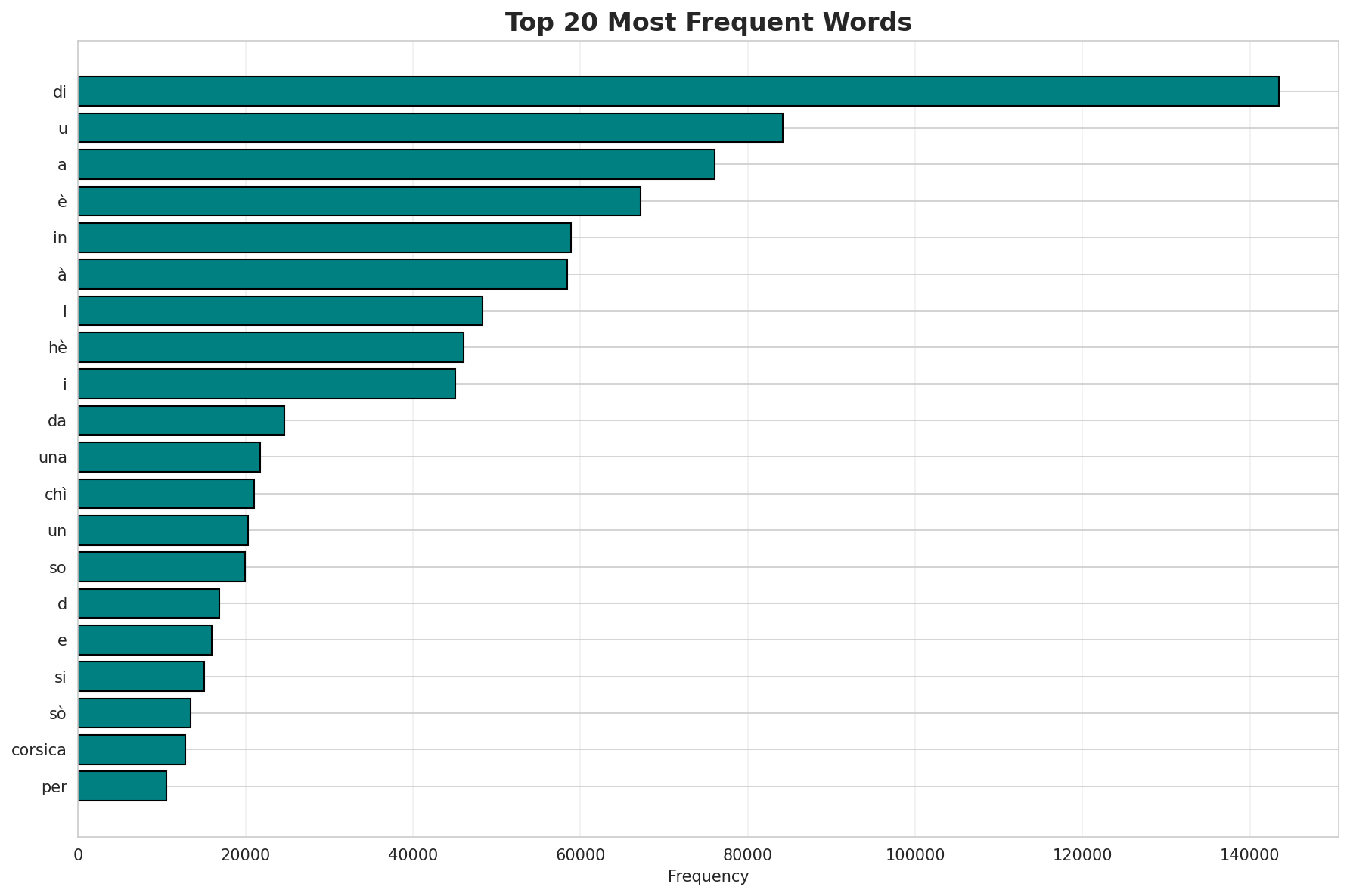

Most Common Words

| Rank | Word | Frequency |
|---|---|---|
| 1 | di | 143,436 |
| 2 | u | 84,175 |
| 3 | a | 76,019 |
| 4 | è | 67,153 |
| 5 | in | 58,881 |
| 6 | à | 58,439 |
| 7 | l | 48,309 |
| 8 | hè | 46,050 |
| 9 | i | 45,085 |
| 10 | da | 24,609 |

Least Common Words (from vocabulary)

| Rank | Word | Frequency |
|---|---|---|
| 1 | hannovra | 2 |
| 2 | multifau | 2 |
| 3 | vendanges | 2 |
| 4 | voceratrice | 2 |
| 5 | paysage | 2 |
| 6 | coin | 2 |
| 7 | paysan | 2 |
| 8 | spezialità | 2 |
| 9 | alerta | 2 |
| 10 | ꦈꦠꦩ | 2 |

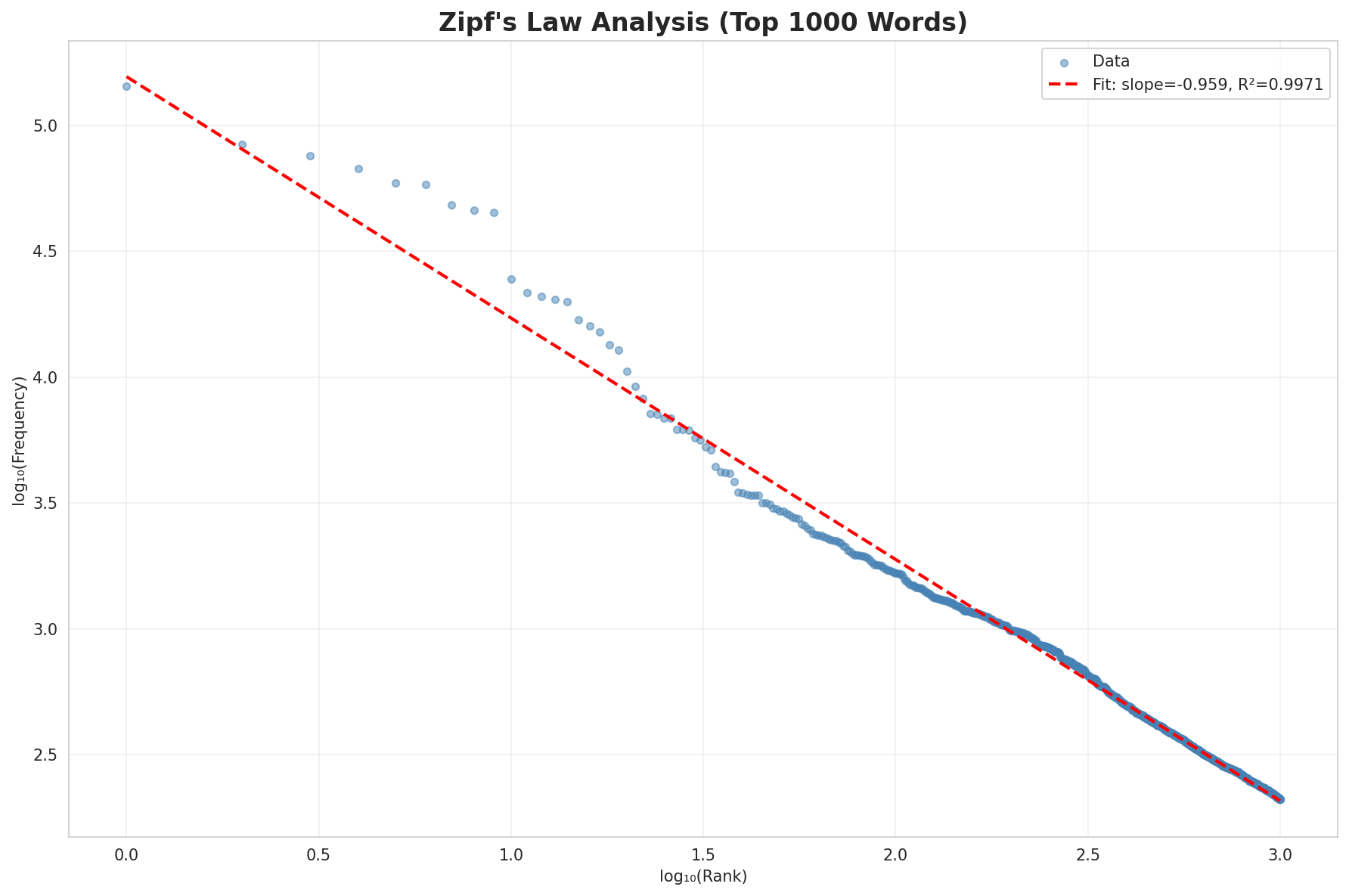

Zipf's Law Analysis

| Metric | Value |
|---|---|
| Zipf Coefficient | 1.0566 |
| R² (Goodness of Fit) | 0.997058 |
| Adherence Quality | excellent |

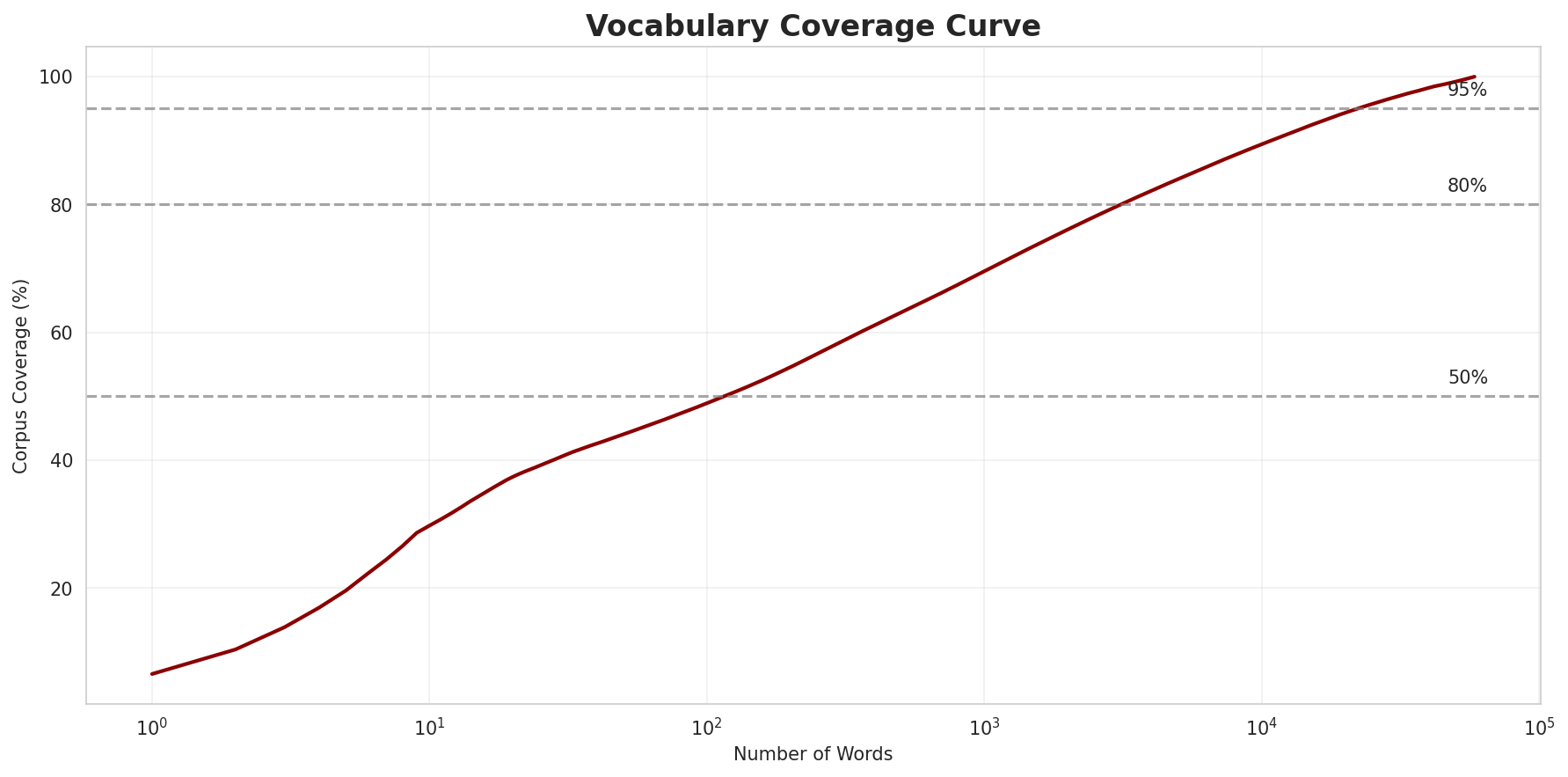

Coverage Analysis

| Top N Words | Coverage |
|---|---|
| Top 100 | 48.9% |
| Top 1,000 | 69.5% |
| Top 5,000 | 84.0% |
| Top 10,000 | 89.4% |

Key Findings

- Zipf Compliance: R²=0.9971 indicates excellent adherence to Zipf's law

- High Frequency Dominance: Top 100 words cover 48.9% of corpus

- Long Tail: The remaining 48,569 words (beyond the top 10,000) account for only 10.6% of tokens

5. Word Embeddings Evaluation

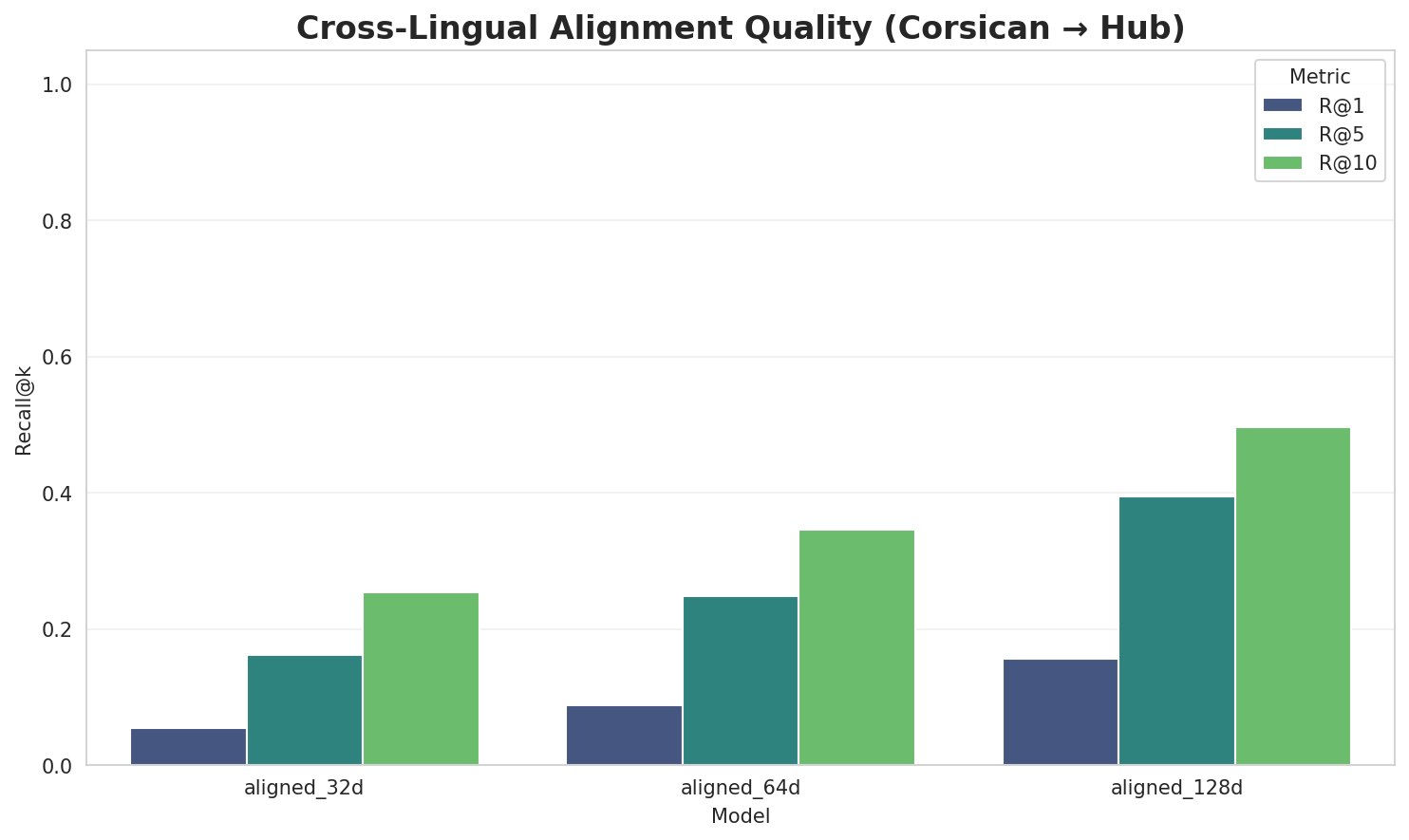

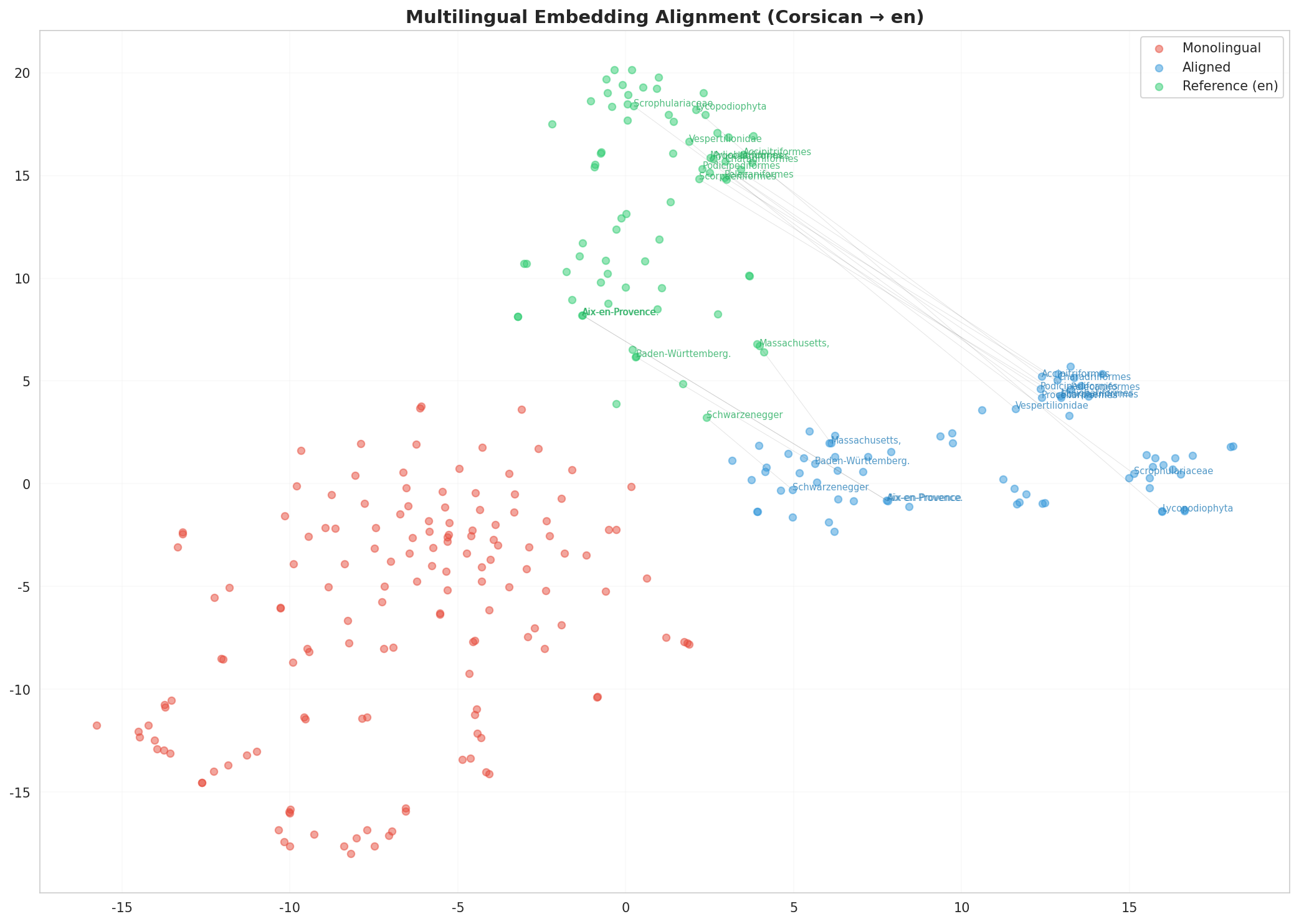

5.1 Cross-Lingual Alignment

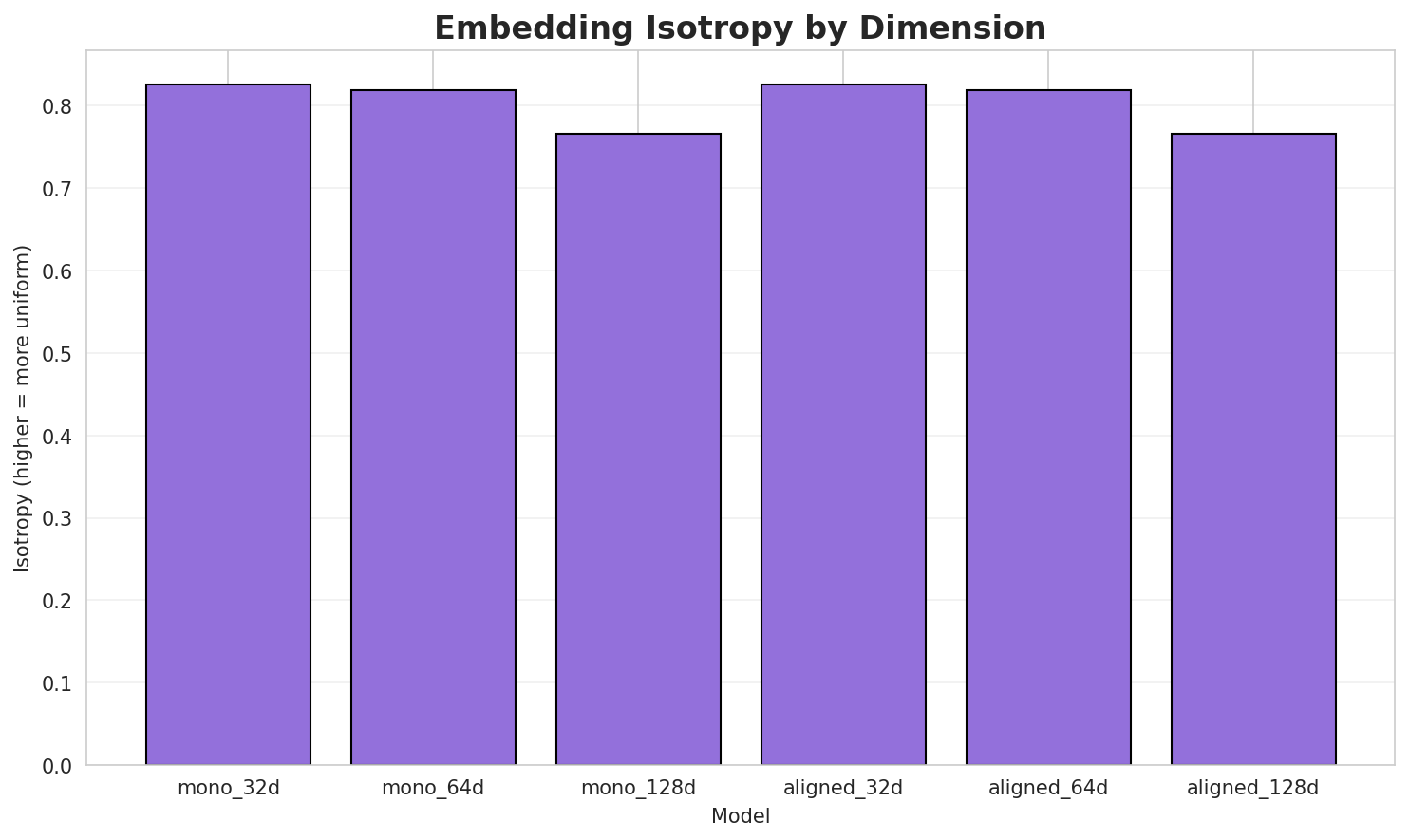

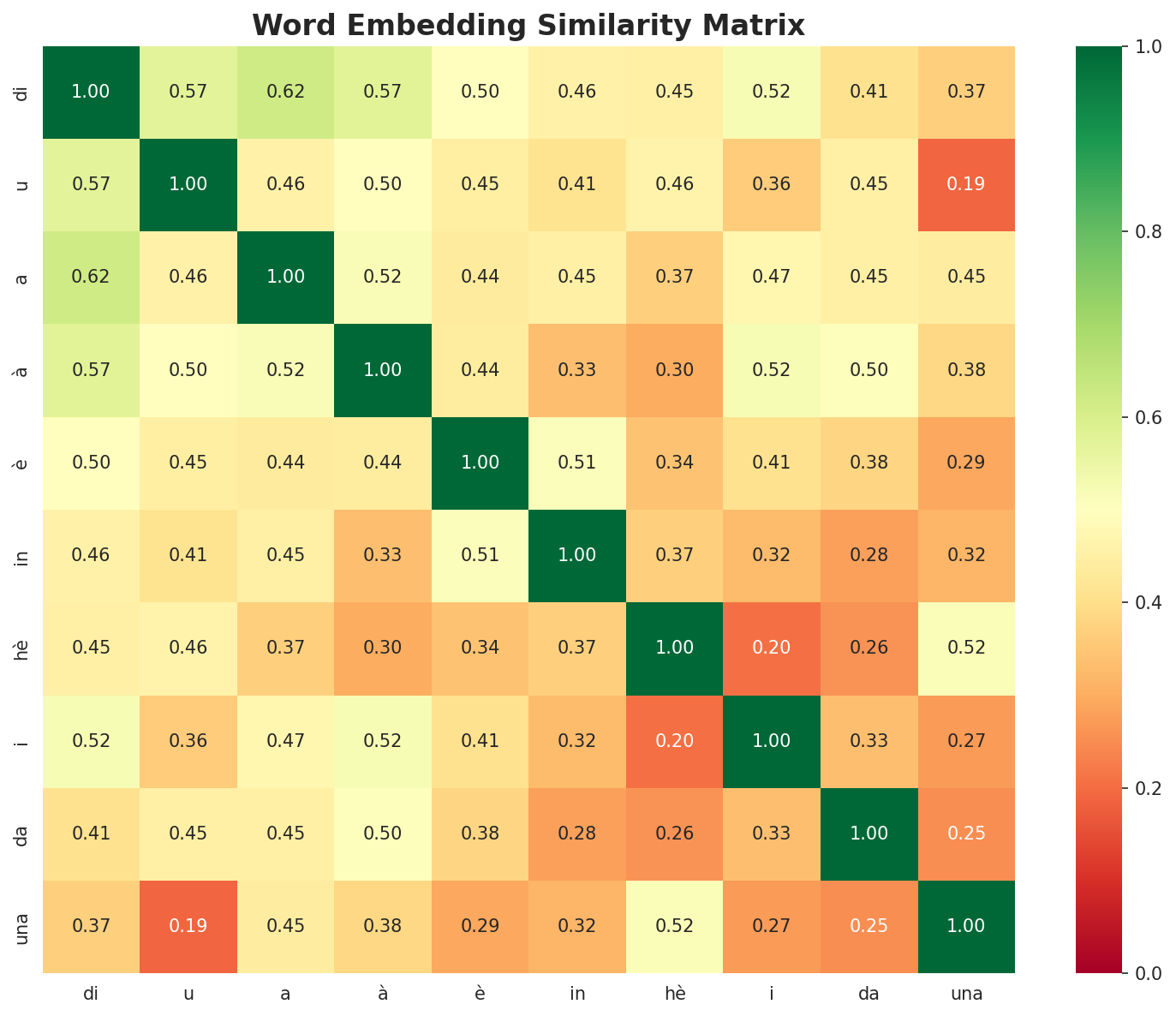

5.2 Model Comparison

| Model | Dimension | Isotropy | Semantic Density | Alignment R@1 | Alignment R@10 |
|---|---|---|---|---|---|
| mono_32d | 32 | 0.8262 🏆 | 0.3363 | N/A | N/A |
| mono_64d | 64 | 0.8192 | 0.2582 | N/A | N/A |
| mono_128d | 128 | 0.7654 | 0.2010 | N/A | N/A |
| aligned_32d | 32 | 0.8262 | 0.3340 | 0.0540 | 0.2540 |
| aligned_64d | 64 | 0.8192 | 0.2633 | 0.0880 | 0.3460 |
| aligned_128d | 128 | 0.7654 | 0.1975 | 0.1560 | 0.4960 |
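Alignment R@1 and R@10 are cross-lingual retrieval scores. For each source word with a known translation, target-language words are ranked by cosine similarity. R@k is then the fraction of source words whose gold translation lands in the top k. The sketch below assumes a gold dictionary given as index pairs. The report does not say which target language or dictionary it uses, and `recall_at_k` is a hypothetical helper:

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def recall_at_k(source_vecs, target_vecs, gold, k):
    """gold[i] is the index in target_vecs of the correct translation of source word i."""
    hits = 0
    for i, src in enumerate(source_vecs):
        # Rank all target words by similarity to this source word, best first
        ranked = sorted(range(len(target_vecs)),
                        key=lambda j: cosine(src, target_vecs[j]),
                        reverse=True)
        hits += gold[i] in ranked[:k]
    return hits / len(source_vecs)


source = [[1.0, 0.0], [0.0, 1.0]]
target = [[0.9, 0.1], [0.1, 0.9]]
print(recall_at_k(source, target, gold=[0, 1], k=1))  # 1.0
print(recall_at_k(source, target, gold=[1, 0], k=1))  # 0.0
```

Sorting every target word per query is fine for illustration. A real evaluation over tens of thousands of words would normalize the vectors once and use a matrix product instead.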

Key Findings

- Best Isotropy: mono_32d with 0.8262 (more uniform distribution)

- Semantic Density: The average pairwise similarity across the six models is 0.2651 (range 0.1975 to 0.3363). Lower values indicate better semantic separation.

- Alignment Quality: Aligned models achieve up to 15.6% R@1 in cross-lingual retrieval.

- Recommendation: 128d aligned for best cross-lingual performance
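Semantic density is described above as an average pairwise similarity. The sketch below takes the mean cosine similarity over all distinct pairs in a sample of word vectors. The report does not state its sample size, or whether it averages over all pairs or a random subset, so both choices here are assumptions:

```python
import math
from itertools import combinations


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def semantic_density(vectors):
    """Mean cosine similarity over all unordered pairs of distinct vectors."""
    sims = [cosine(u, v) for u, v in combinations(vectors, 2)]
    return sum(sims) / len(sims)


vecs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(round(semantic_density(vecs), 4))  # 0.4714
```

A lower value means that randomly chosen words point in more different directions. This is why the key finding treats lower density as better separation.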

6. Morphological Analysis (Experimental)

This section presents an automated morphological analysis derived from the statistical divergence between word-level and subword-level models. By analyzing where subword predictability spikes and where word-level coverage fails, we can infer linguistic structures without supervised data.

6.1 Productivity & Complexity

| Metric | Value | Interpretation | Recommendation |
|---|---|---|---|
| Productivity Index | 5.000 | High morphological productivity | Reliable analysis |
| Idiomaticity Gap | 0.002 | Low formulaic content | - |

6.2 Affix Inventory (Productive Units)

These are the most productive prefixes and suffixes identified by sampling the vocabulary for global substitutability patterns. A unit is considered an affix if stripping it leaves a valid stem that appears in other contexts.
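The stripping criterion can be sketched as follows. A candidate prefix counts as productive when removing it from several words leaves a remainder that is attested elsewhere in the vocabulary. This is a simplified illustration of that rule only. The report's sampling procedure and thresholds are not given, so `productive_prefixes`, `min_stem_len` and `min_support` are hypothetical:

```python
from collections import defaultdict


def productive_prefixes(vocab, max_len=3, min_stem_len=3, min_support=2):
    """Return {prefix: [words]} where stripping the prefix leaves another vocabulary word.

    Simplification (assumption): a stem "appears in other contexts" if it is
    itself a word in the vocabulary.
    """
    vocab = set(vocab)
    support = defaultdict(list)
    for word in vocab:
        for n in range(1, max_len + 1):
            prefix, stem = word[:n], word[n:]
            if len(stem) >= min_stem_len and stem in vocab:
                support[prefix].append(word)
    # Keep only prefixes supported by enough distinct words
    return {p: sorted(ws) for p, ws in support.items() if len(ws) >= min_support}


words = ["vede", "rivede", "torna", "ritorna", "fattu"]
print(productive_prefixes(words))  # {'ri': ['ritorna', 'rivede']}
```

The suffix case mirrors this by splitting with `word[:-n]` and `word[-n:]` instead.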

Productive Prefixes

| Prefix | Examples |
|---|---|
| cu- | cunfutà, cuddazioni, cuntera |
| ca- | castres, caprimulgus, calciu |
| ri- | rivede, rispettà, riurganizò |
| in- | ingegneri, incausà, indì |
| pr- | pridatori, privileghju, preferisci |
| di- | dinastìa, disintegra, dicennovi |

Productive Suffixes

| Suffix | Examples |
|---|---|
| -i | addevi, ingegneri, midianti |
| -u | spagnolu, belgiu, vòtu |
| -a | dinastìa, leucoraja, seduta |
| -e | rivede, uccidentale, marginale |
| -tu | vòtu, validatu, prisirvatu |
| -ti | midianti, rapprisintati, sminticati |
| -ni | cuddazioni, vogliini, cardini |
| -ta | seduta, atalanta, rota |

6.3 Bound Stems (Lexical Roots)

Bound stems are high-frequency subword units that are semantically cohesive but rarely appear as standalone words. These often correspond to the 'core' of a word that requires inflection or derivation to be valid.

| Stem | Cohesion | Substitutability | Examples |
|---|---|---|---|
| endu | 2.14x | 73 contexts | fendu, vendu, dendu |
| enti | 1.81x | 118 contexts | nenti, denti, lenti |
| igli | 1.63x | 112 contexts | gigli, migli, cigli |
| aghj | 1.46x | 142 contexts | aghji, aghju, aghja |
| glia | 1.66x | 70 contexts | aglia, paglia, figlia |
| azio | 1.75x | 56 contexts | tazio, lazio, orazio |
| zion | 1.65x | 64 contexts | azione, nozione, lezioni |
| ment | 1.48x | 87 contexts | mente, menti, menta |
| cors | 1.80x | 33 contexts | corso, corsa, corse |
| ific | 1.57x | 45 contexts | pacific, unificò, unificà |
| tura | 1.38x | 62 contexts | datura, altura, natura |
| sica | 1.56x | 37 contexts | mùsica, fìsica, sicani |

6.4 Affix Compatibility (Co-occurrence)

This table shows which prefixes and suffixes most frequently co-occur on the same stems, revealing the 'stacking' rules of the language's morphology.

| Prefix | Suffix | Frequency | Examples |
|---|---|---|---|
| cu- | -i | 84 words | curteghji, cubiti |
| cu- | -u | 82 words | cuntestatu, cunvertitu |
| ri- | -u | 67 words | righjistru, riguardu |
| cu- | -a | 64 words | cultelleria, cunsacra |
| cu- | -e | 62 words | cundannate, cunstruzione |
| in- | -u | 61 words | ingombru, inchietu |
| ca- | -a | 59 words | calandra, cantata |
| in- | -i | 58 words | insufficienti, intarsizioni |
| ca- | -u | 58 words | caratteru, capistranu |
| pr- | -i | 56 words | preparazioni, prisintati |

6.5 Recursive Morpheme Segmentation

Using Recursive Hierarchical Substitutability, we decompose complex words into their constituent morphemes. This approach handles nested affixes (e.g., prefix-prefix-root-suffix).
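A recursive decomposition in this spirit can be sketched greedily. Known prefixes are peeled off from the left, then at most one known suffix from the right, as long as the remaining stem stays long enough. This is a simplification, not the report's Recursive Hierarchical Substitutability scoring. The affix lists are taken from the inventories in 6.2, and `segment` and `min_stem_len` are hypothetical:

```python
PREFIXES = ["cu", "ca", "ri", "in", "pr", "di"]          # from the 6.2 prefix inventory
SUFFIXES = ["tu", "ti", "ni", "ta", "i", "u", "a", "e"]  # from the 6.2 suffix inventory


def segment(word, min_stem_len=3):
    """Greedy sketch: strip prefixes recursively from the left, then at most one suffix."""
    for prefix in sorted(PREFIXES, key=len, reverse=True):
        rest = word[len(prefix):]
        if word.startswith(prefix) and len(rest) >= min_stem_len:
            return [prefix] + segment(rest, min_stem_len)
    for suffix in sorted(SUFFIXES, key=len, reverse=True):
        stem = word[:-len(suffix)]
        if word.endswith(suffix) and len(stem) >= min_stem_len:
            return [stem, suffix]
    return [word]  # no affix could be removed without making the stem too short


for w in ["indibulitu", "dirighjitu", "dimustrati", "rivoltani"]:
    print(w, "->", "-".join(segment(w)))
# indibulitu -> in-di-buli-tu
# dirighjitu -> di-ri-ghji-tu
# dimustrati -> di-mustra-ti
# rivoltani -> ri-volta-ni
```

These four splits happen to match the table below. The greedy rule over-segments other words: for ricustruisce it also strips the final -e, giving ri-cu-struisc-e. Deciding such cases is where substitutability-based confidence scores are needed.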

| Word | Suggested Split | Confidence | Stem |
|---|---|---|---|
| indibulitu | in-di-buli-tu | 7.5 | buli |
| dirighjitu | di-ri-ghji-tu | 7.5 | ghji |
| dimustrati | di-mustra-ti | 6.0 | mustra |
| ricustruisce | ri-cu-struisce | 6.0 | struisce |
| ricustruite | ri-cu-struite | 6.0 | struite |
| saturnianu | saturn-ia-nu | 6.0 | saturn |
| rivoltani | ri-volta-ni | 6.0 | volta |
| divenendu | di-venendu | 4.5 | venendu |
| indicheghjanu | in-di-cheghja-nu | 4.5 | cheghja |
| accupavanu | accupava-nu | 4.5 | accupava |
| granulita | granuli-ta | 4.5 | granuli |
| principionu | pr-in-cipio-nu | 4.5 | cipio |
| attaccani | attacca-ni | 4.5 | attacca |
| supranatu | suprana-tu | 4.5 | suprana |
| asciuvatu | asciuva-tu | 4.5 | asciuva |

6.6 Linguistic Interpretation

Automated Insight: Corsican shows high morphological productivity. The subword models reach far lower perplexity than the word models (220 vs. 9,217 for 2-grams), suggesting a rich system of affixation or compounding.

7. Summary & Recommendations

Production Recommendations

| Component | Recommended | Rationale |
|---|---|---|
| Tokenizer | 64k BPE | Best compression (4.22x) |
| N-gram | 2-gram (subword) | Lowest perplexity (220) |
| Markov | Context-4 (word) | Highest predictability (93.8%) |
| Embeddings | aligned_128d | Best cross-lingual alignment (R@1 15.6%, R@10 49.6%) |

Appendix: Metrics Glossary & Interpretation Guide

This section provides definitions, intuitions, and guidance for interpreting the metrics used throughout this report.

Tokenizer Metrics

Compression Ratio

Definition: The ratio of characters to tokens (chars/token). Measures how efficiently the tokenizer represents text.

Intuition: Higher compression means fewer tokens needed to represent the same text, reducing sequence lengths for downstream models. A 3x compression means ~3 characters per token on average.

What to seek: Higher is generally better for efficiency, but extremely high compression may indicate overly aggressive merging that loses morphological information.

Average Token Length (Fertility)

Definition: Mean number of characters per token produced by the tokenizer.

Intuition: Reflects the granularity of tokenization. Longer tokens capture more context but may struggle with rare words; shorter tokens are more flexible but increase sequence length.

What to seek: Values between 2 and 5 characters suit most languages. Arabic and other morphologically rich languages may benefit from slightly longer tokens.

Unknown Token Rate (OOV Rate)

Definition: Percentage of tokens that map to the unknown/UNK token, indicating words the tokenizer cannot represent.

Intuition: Lower OOV means better vocabulary coverage. High OOV indicates the tokenizer encounters many unseen character sequences.

What to seek: Below 1% is excellent; below 5% is acceptable. BPE tokenizers typically achieve very low OOV due to subword fallback.
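The three tokenizer metrics can be computed from a tokenized sample as shown below. This sketch assumes the tokenizer returns a list of string pieces with a SentencePiece-style `▁` word-boundary marker and a literal `<unk>` piece for unknowns. The report does not specify its exact character-counting convention (for example, whether spaces count), so `tokenizer_metrics` is a hypothetical helper and its numbers are approximate:

```python
def tokenizer_metrics(text, pieces, unk="<unk>"):
    """Compression (chars/token), mean piece length, and UNK rate for one tokenized text."""
    n_tokens = len(pieces)
    compression = len(text) / n_tokens  # spaces in the raw text are counted here
    # Mean length of the pieces themselves, ignoring the "▁" boundary marker
    avg_len = sum(len(p.replace("▁", "")) for p in pieces) / n_tokens
    unk_rate = sum(p == unk for p in pieces) / n_tokens
    return compression, avg_len, unk_rate


text = "U Mucale hè una cumuna"
pieces = ["▁u", "▁mu", "cale", "▁hè", "▁una", "▁cumuna"]  # first six 8k pieces from Sample 2
compression, avg_len, unk_rate = tokenizer_metrics(text, pieces)
print(f"compression={compression:.2f}x  avg_len={avg_len:.2f}  unk_rate={unk_rate:.2%}")
```

Here compression and average piece length differ because spaces count toward the former but not the latter. In the results table the two columns agree to rounding, which suggests the report computes both with a single convention.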

N-gram Model Metrics

Perplexity

Definition: Measures how "surprised" the model is by test data. Mathematically: 2^(cross-entropy). Lower values indicate better prediction.

Intuition: If perplexity is 100, the model is as uncertain as if choosing uniformly among 100 options at each step. A perplexity of 10 means effectively choosing among 10 equally likely options.

What to seek: Lower is better. Perplexity typically decreases with larger n-grams (more context) when the corpus is large enough; with sparse higher-order counts it can rise instead. Values vary widely by language and corpus size.
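As a concrete instance of the definition, the sketch below trains a bigram model with add-one (Laplace) smoothing. It reports perplexity as 2 raised to the average negative log2-probability per predicted token. Add-one smoothing is an assumption chosen for simplicity; the report does not state which smoothing or held-out split it uses:

```python
import math
from collections import Counter


def train_bigram(tokens):
    """Return unigram counts, bigram counts, and the vocabulary."""
    unigrams = Counter(tokens)
    bigrams = Counter(zip(tokens, tokens[1:]))
    return unigrams, bigrams, set(tokens)


def bigram_perplexity(tokens, unigrams, bigrams, vocab):
    """2 ** cross-entropy of `tokens` under an add-one smoothed bigram model."""
    V = len(vocab)
    log2_prob = 0.0
    for prev, cur in zip(tokens, tokens[1:]):
        p = (bigrams[(prev, cur)] + 1) / (unigrams[prev] + V)
        log2_prob += math.log2(p)
    cross_entropy = -log2_prob / (len(tokens) - 1)  # bits per predicted token
    return 2 ** cross_entropy


train_tokens = "di u mare di a corsica di u mare".split()
held_out = "di u mare".split()
model = train_bigram(train_tokens)
print(round(bigram_perplexity(held_out, *model), 3))  # 2.494
```

Here the two predictions have probabilities 3/8 and 3/7. The perplexity is therefore (3/8 × 3/7)^(-1/2) ≈ 2.494: the model is about as uncertain as a uniform choice among two and a half options.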

Entropy

Definition: Average information content (in bits) needed to encode the next token given the context. Related to perplexity: perplexity = 2^entropy.

Intuition: High entropy means high uncertainty/randomness; low entropy means predictable patterns. Natural language typically has entropy between 1-4 bits per character.

What to seek: Lower entropy indicates more predictable text patterns. Entropy typically decreases as n-gram size increases, though sparse higher-order statistics can reverse this trend.

Coverage (Top-K)

Definition: Percentage of corpus occurrences explained by the top K most frequent n-grams.

Intuition: High coverage with few patterns indicates repetitive/formulaic text; low coverage suggests diverse vocabulary usage.

What to seek: Depends on use case. For language modeling, moderate coverage (40-60% with top-1000) is typical for natural text.
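Top-K coverage can be computed in three steps: count n-gram occurrences, take the K most frequent, and divide their total count by the count of all n-gram occurrences. A minimal sketch over whitespace tokens follows. The tokenization rule and the helper names are assumptions:

```python
from collections import Counter


def ngrams(tokens, n):
    """All contiguous n-token tuples in order."""
    return list(zip(*(tokens[i:] for i in range(n))))


def top_k_coverage(tokens, n, k):
    """Share of all n-gram occurrences accounted for by the k most frequent n-grams."""
    counts = Counter(ngrams(tokens, n))
    top = sum(c for _, c in counts.most_common(k))
    return top / sum(counts.values())


tokens = "di u mare di u monte di a corsica".split()
print(f"{top_k_coverage(tokens, 2, 1):.1%}")  # 25.0%
```

The single most frequent bigram ("di", "u") occurs twice among eight bigrams, hence 25%. The Top-100 and Top-1000 columns in the n-gram table correspond to k = 100 and k = 1000.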

Markov Chain Metrics

Average Entropy

Definition: Mean entropy across all contexts, measuring average uncertainty in next-word prediction.

Intuition: Lower entropy means the model is more confident about what comes next. Context-1 has high entropy (many possible next words); Context-4 has low entropy (few likely continuations).

What to seek: Decreasing entropy with larger context sizes. Very low entropy (<0.1) indicates highly deterministic transitions.

Branching Factor

Definition: Average number of unique next tokens observed for each context.

Intuition: High branching = many possible continuations (flexible but uncertain); low branching = few options (predictable but potentially repetitive).

What to seek: Branching factor should decrease with context size. Values near 1.0 indicate nearly deterministic chains.

Predictability

Definition: Derived metric: (1 - normalized_entropy) × 100%. Indicates how deterministic the model's predictions are.

Intuition: 100% predictability means the next word is always certain; 0% means completely random. Real text falls between these extremes.

What to seek: Higher predictability for text generation quality, but too high (>98%) may produce repetitive output.
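The three Markov metrics can be sketched from transition counts. In the results table, Perplexity equals 2^(Avg Entropy) and Predictability equals (1 - Avg Entropy) × 100% to rounding. For example, word context-1 gives 2^0.8927 ≈ 1.857 and 1 - 0.8927 ≈ 10.7%. This suggests the reported entropy is already normalized to [0, 1]. The normalization used here is an assumption, since the report does not specify it: each context's entropy is divided by log2 of its number of distinct continuations, and the results are averaged unweighted over contexts. `markov_metrics` is a hypothetical helper:

```python
import math
from collections import Counter, defaultdict


def markov_metrics(tokens, k):
    """Average normalized entropy, perplexity, branching factor, and predictability."""
    transitions = defaultdict(Counter)
    for i in range(len(tokens) - k):
        transitions[tuple(tokens[i:i + k])][tokens[i + k]] += 1

    entropies, branching = [], []
    for counts in transitions.values():
        total = sum(counts.values())
        h = -sum((c / total) * math.log2(c / total) for c in counts.values())
        n_next = len(counts)
        # Assumed normalization: divide by the maximum entropy for this many continuations
        entropies.append(h / math.log2(n_next) if n_next > 1 else 0.0)
        branching.append(n_next)

    avg_entropy = sum(entropies) / len(entropies)
    return {
        "avg_entropy": avg_entropy,
        "perplexity": 2 ** avg_entropy,
        "branching_factor": sum(branching) / len(branching),
        "predictability": (1 - avg_entropy) * 100,
    }


print(markov_metrics("a b a b a c".split(), k=1))
```

In this toy sequence, "a" is followed by "b" twice and "c" once, while "b" is always followed by "a". This gives a branching factor of 1.5, and only the "a" context contributes entropy.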

Vocabulary & Zipf's Law Metrics

Zipf's Coefficient

Definition: The magnitude of the slope of the log-log plot of word frequency vs. rank. Zipf's law predicts a slope of approximately -1, i.e., a coefficient near 1.

Intuition: A coefficient near 1 indicates the corpus follows natural language patterns where a few words are very common and most words are rare.

What to seek: Values between 0.8 and 1.2 indicate a healthy natural language distribution. Deviations may suggest domain-specific or artificial text.

R² (Coefficient of Determination)

Definition: Measures how well the linear fit explains the frequency-rank relationship. Ranges from 0 to 1.

Intuition: R² near 1.0 means the data closely follows Zipf's law; lower values indicate deviation from expected word frequency patterns.

What to seek: R² > 0.95 is excellent; > 0.99 indicates near-perfect Zipf adherence typical of large natural corpora.
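A minimal Zipf fit regresses log frequency on log rank with ordinary least squares. It returns the negated slope as the coefficient, which is how the results table appears to present it (a positive 1.0566), together with the R² of the fit. Fitting over all ranks with unweighted least squares is an assumption; the report does not state its fitting range or method. `zipf_fit` is a hypothetical helper:

```python
import math


def zipf_fit(frequencies):
    """Least-squares fit of log(freq) = a + b*log(rank); returns (-b, R^2)."""
    freqs = sorted(frequencies, reverse=True)
    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return -slope, 1 - ss_res / ss_tot


# Synthetic frequencies that follow Zipf's law exactly: f(r) = 1000 / r
coef, r2 = zipf_fit([1000 / r for r in range(1, 501)])
print(round(coef, 4), round(r2, 4))  # 1.0 1.0
```

The choice of logarithm base does not affect the slope or R², so natural logs are used for convenience.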

Vocabulary Coverage

Definition: Cumulative percentage of corpus tokens accounted for by the top N words.

Intuition: Shows how concentrated word usage is. If top-100 words cover 50% of text, the corpus relies heavily on common words.

What to seek: Top-100 covering 30-50% is typical. Higher coverage indicates more repetitive text; lower suggests richer vocabulary.

Word Embedding Metrics

Isotropy

Definition: Measures how uniformly distributed vectors are in the embedding space. Computed as the ratio of minimum to maximum singular values.

Intuition: High isotropy (near 1.0) means vectors spread evenly in all directions; low isotropy means vectors cluster in certain directions, reducing expressiveness.

What to seek: Higher isotropy generally indicates better-quality embeddings. Values > 0.1 are reasonable; > 0.3 is good. Lower-dimensional embeddings tend to have higher isotropy.
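Following the definition above (the ratio of the smallest to the largest singular value of the embedding matrix), isotropy can be computed with NumPy's SVD. The report does not state whether it mean-centers the vectors first. This sketch therefore exposes centering as an optional flag, which is an assumption, and `isotropy` is a hypothetical helper:

```python
import numpy as np


def isotropy(embeddings, center=False):
    """Ratio of smallest to largest singular value of a (num_words, dim) matrix."""
    X = np.asarray(embeddings, dtype=float)
    if center:
        X = X - X.mean(axis=0)  # optional mean-centering (assumption)
    singular_values = np.linalg.svd(X, compute_uv=False)
    return singular_values.min() / singular_values.max()


print(isotropy(np.eye(4)))
print(isotropy([[2.0, 0.0], [0.0, 1.0]]))
```

The identity matrix spreads equally over every direction and scores 1.0. The diagonal example stretches one axis twice as much as the other and scores 0.5. Real embedding matrices have far more rows than dimensions, so all `dim` singular values are available.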

Average Norm

Definition: Mean magnitude (L2 norm) of word vectors in the embedding space.

Intuition: Indicates the typical "length" of vectors. Consistent norms suggest stable training; high variance may indicate some words are undertrained.

What to seek: Relatively consistent norms across models. The absolute value matters less than consistency (low std deviation).

Cosine Similarity

Definition: Measures angular similarity between vectors, ranging from -1 (opposite) to 1 (identical direction).

Intuition: Words with similar meanings should have high cosine similarity. This is the standard metric for semantic relatedness in embeddings.

What to seek: Semantically related words should score > 0.5; unrelated words should be near 0. Synonyms often score > 0.7.

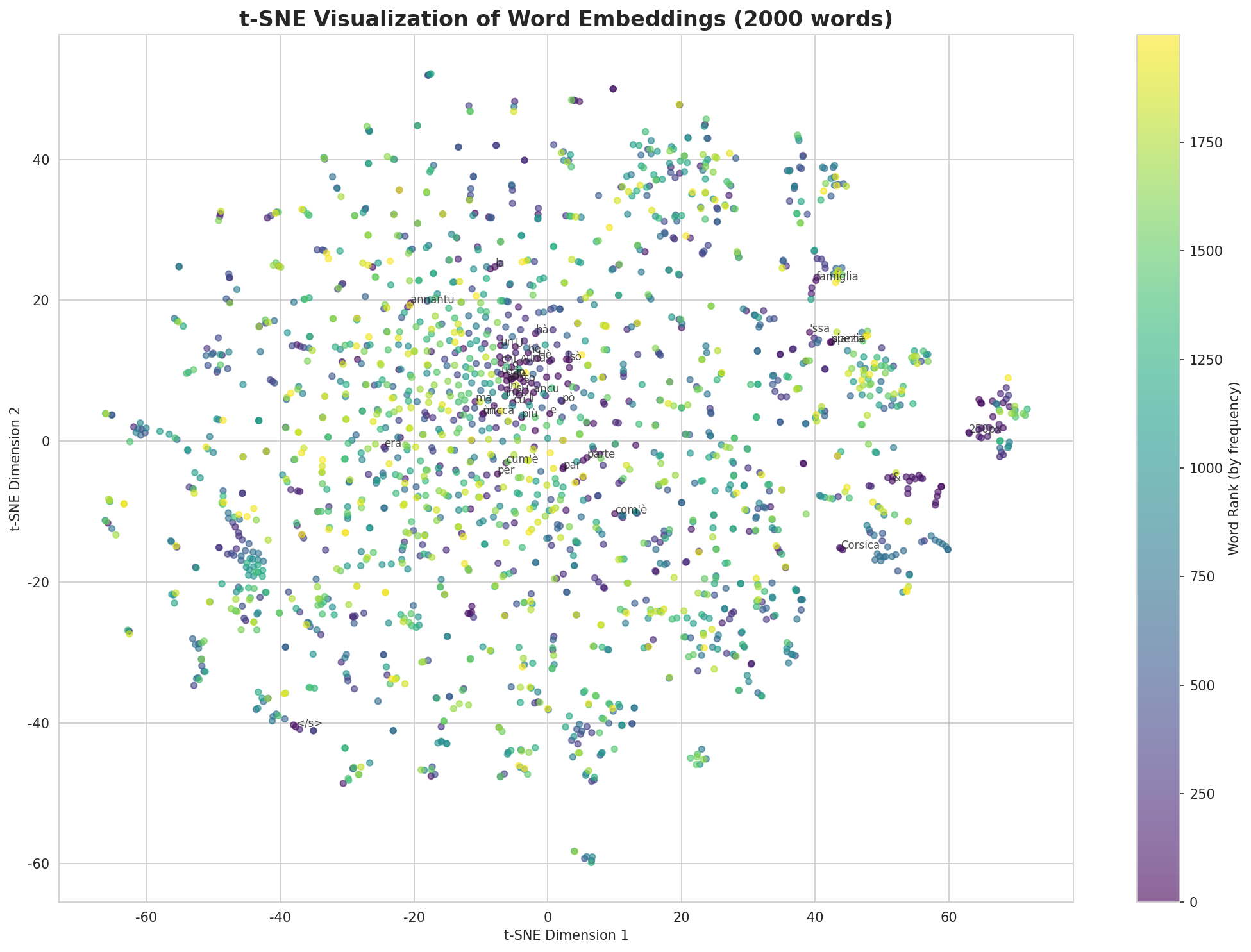

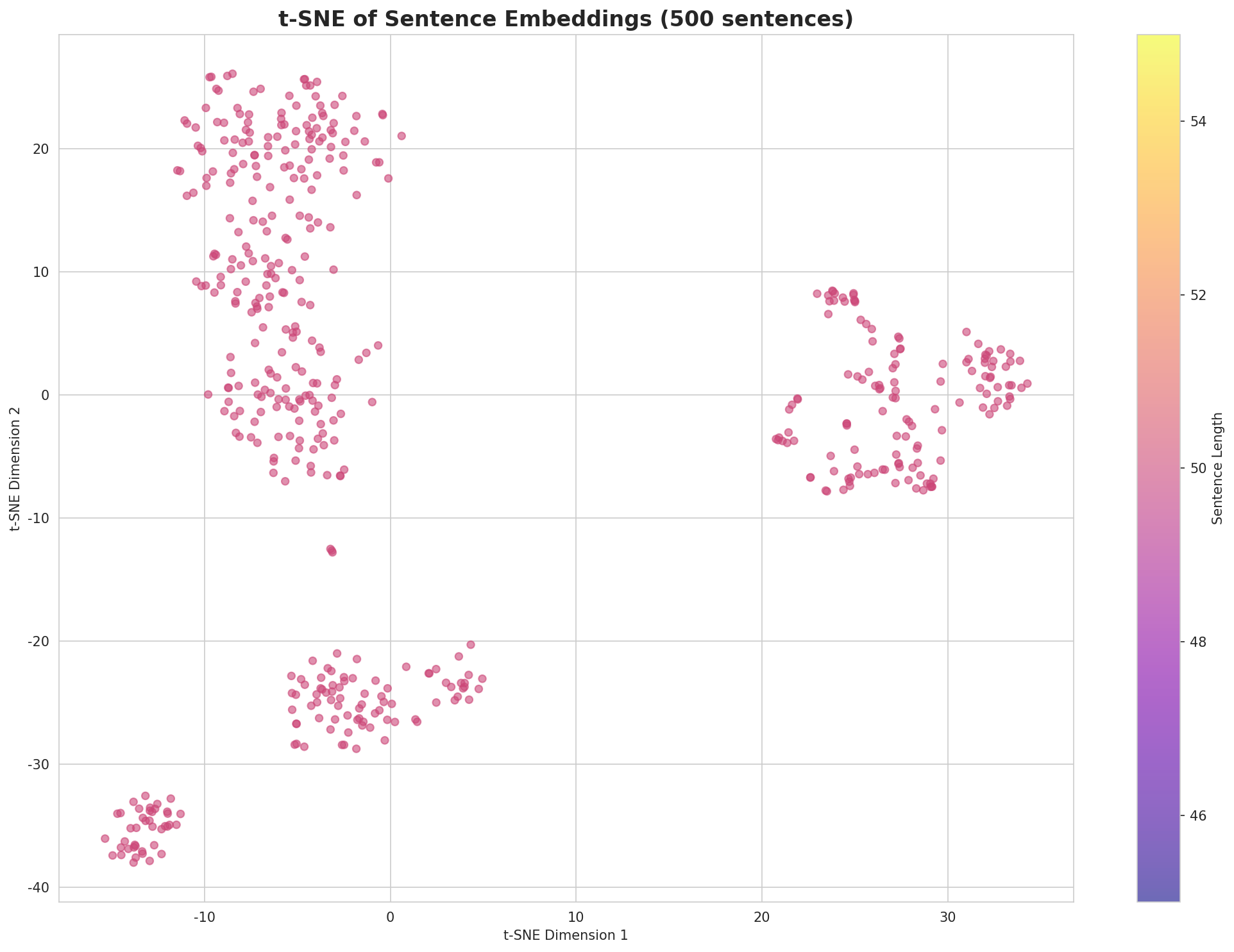

t-SNE Visualization

Definition: t-Distributed Stochastic Neighbor Embedding (t-SNE), a dimensionality reduction technique that preserves local structure for visualization.

Intuition: Clusters in t-SNE plots indicate groups of semantically related words. Spread indicates vocabulary diversity; tight clusters suggest semantic coherence.

What to seek: Meaningful clusters (e.g., numbers together, verbs together). Avoid over-interpreting distances - t-SNE preserves local, not global, structure.

General Interpretation Guidelines

- Compare within model families: Metrics are most meaningful when comparing models of the same type (e.g., 8k vs 64k tokenizer).

- Consider trade-offs: Better performance on one metric often comes at the cost of another (e.g., compression vs. OOV rate).

- Context matters: Optimal values depend on downstream tasks. Text generation may prioritize different metrics than classification.

- Corpus influence: All metrics are influenced by corpus characteristics. Wikipedia text differs from social media or literature.

- Language-specific patterns: Morphologically rich languages (like Arabic) may show different optimal ranges than analytic languages.

Visualizations Index

| Visualization | Description |
|---|---|
| Tokenizer Compression | Compression ratios by vocabulary size |
| Tokenizer Fertility | Average token length by vocabulary |
| Tokenizer OOV | Unknown token rates |
| Tokenizer Total Tokens | Total tokens by vocabulary |
| N-gram Perplexity | Perplexity by n-gram size |
| N-gram Entropy | Entropy by n-gram size |
| N-gram Coverage | Top pattern coverage |
| N-gram Unique | Unique n-gram counts |
| Markov Entropy | Entropy by context size |
| Markov Branching | Branching factor by context |
| Markov Contexts | Unique context counts |
| Zipf's Law | Frequency-rank distribution with fit |
| Vocab Frequency | Word frequency distribution |
| Top 20 Words | Most frequent words |
| Vocab Coverage | Cumulative coverage curve |
| Embedding Isotropy | Vector space uniformity |
| Embedding Norms | Vector magnitude distribution |
| Embedding Similarity | Word similarity heatmap |
| Nearest Neighbors | Similar words for key terms |
| t-SNE Words | 2D word embedding visualization |
| t-SNE Sentences | 2D sentence embedding visualization |
| Position Encoding | Encoding method comparison |
| Model Sizes | Storage requirements |
| Performance Dashboard | Comprehensive performance overview |

About This Project

Data Source

Models trained on wikipedia-monthly, a monthly snapshot of Wikipedia articles across 300+ languages.

Project

A project by Wikilangs - Open-source NLP models for every Wikipedia language.

Maintainer

Citation

If you use these models in your research, please cite:

@misc{wikilangs2025,
  author = {Kamali, Omar},
  title = {Wikilangs: Open NLP Models for Wikipedia Languages},
  year = {2025},
  doi = {10.5281/zenodo.18073153},
  publisher = {Zenodo},
  url = {https://huggingface.co/wikilangs},
  institution = {Omneity Labs}
}

License

MIT License - Free for academic and commercial use.

Links

- 🌐 Website: wikilangs.org

- 🤗 Models: huggingface.co/wikilangs

- 📊 Data: wikipedia-monthly

- 👤 Author: Omar Kamali

- 🤝 Sponsor: Featherless AI

Generated by Wikilangs Models Pipeline

Report Date: 2026-01-03 20:37:45